Create Agents Using Reinforcement Learning Designer

The Reinforcement Learning Designer app supports the following types of agents: DQN, DDPG, TD3, PPO, TRPO, and SAC.

To train an agent using Reinforcement Learning Designer, you must first create or import an environment. For more information, see Load MATLAB Environments in Reinforcement Learning Designer and Load Simulink Environments in Reinforcement Learning Designer.

Create Agent

To create an agent, on the Reinforcement Learning tab, in the Agent section, click New.

In the Create agent dialog box, specify the following information.

Agent name — Specify the name of your agent.

Environment — Select an environment that you previously created or imported.

Compatible algorithm — Select an agent training algorithm. This list contains only algorithms that are compatible with the environment you select.

The Reinforcement Learning Designer app creates agents with actors and critics based on default deep neural networks. You can specify the following options for the default networks.

Number of hidden units — Specify the number of units in each fully connected or LSTM layer of the actor and critic networks.

Use recurrent neural network — Select this option to create an actor and critic with recurrent neural networks that contain an LSTM layer.

To create the agent, click OK.

The app adds the new default agent to the Agents pane and opens a document for editing the agent options.
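A comparable default agent can also be created at the command line and then imported into the app. The following is a minimal sketch, assuming the predefined CartPole-Discrete environment and a DQN agent. The value 128 and all variable names are illustrative choices, not settings taken from the app.

```matlab
% Assumed example environment; substitute your own environment object.
env = rlPredefinedEnv("CartPole-Discrete");
obsInfo = getObservationInfo(env);
actInfo = getActionInfo(env);

% Command-line counterparts of the two default-network options.
initOpts = rlAgentInitializationOptions( ...
    'NumHiddenUnit',128, ...   % units in each fully connected or LSTM layer
    'UseRNN',false);           % set to true for networks with an LSTM layer

% Create a DQN agent with a default critic network.
agent = rlDQNAgent(obsInfo,actInfo,initOpts);
```

The resulting `agent` variable then appears in the list of agents that the app can import from the workspace.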

Import Agent

You can also import an agent from the MATLAB® workspace into Reinforcement Learning Designer. To do so, on the Reinforcement Learning tab, click Import. Then, under Select Agent, select the agent to import.

The app adds the new imported agent to the Agents pane and opens a document for editing the agent options.

Edit Agent Options

In Reinforcement Learning Designer, you can edit agent options in the corresponding agent document.

You can edit the following options for each agent.

Agent Options — Agent options, such as the sample time and discount factor. Specify these options for all supported agent types.

Exploration Model — Exploration model options. PPO agents do not have an exploration model.

Target Policy Smoothing Model — Options for target policy smoothing, which is supported only for TD3 agents.

For more information on these options, see the corresponding agent options object.

rlDQNAgentOptions — DQN agent options

rlPPOAgentOptions — PPO agent options

rlTRPOAgentOptions — TRPO agent options

rlDDPGAgentOptions — DDPG agent options

rlTD3AgentOptions — TD3 agent options

rlSACAgentOptions — SAC agent options

Import Agent Options

You can import agent options from the MATLAB workspace. To create options for each type of agent, use one of the preceding objects. You can also import options that you previously exported from the Reinforcement Learning Designer app.

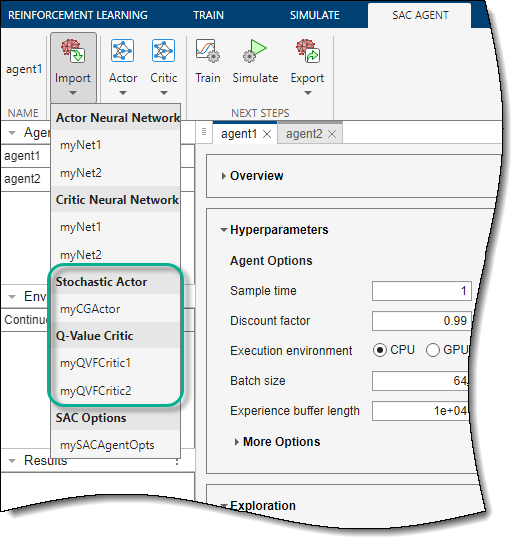

To import the options, on the corresponding Agent tab, click Import. Then, under Options, select an options object. The app lists only compatible options objects from the MATLAB workspace.

The app configures the agent options to match those in the selected options object.
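An options object to import can be prepared at the command line. The following is a minimal sketch for a DQN agent; the property values are illustrative assumptions rather than recommended settings.

```matlab
% Create a DQN options object with default values.
opts = rlDQNAgentOptions;

% General agent options (illustrative values).
opts.SampleTime = 0.1;
opts.DiscountFactor = 0.99;
opts.MiniBatchSize = 64;

% Exploration model option for the epsilon-greedy policy.
opts.EpsilonGreedyExploration.EpsilonMin = 0.01;
```

Because the app lists only compatible options objects, `opts` would be offered for DQN agents only.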

Import Actor and Critic

You can import actors and critics from the MATLAB workspace. You can also import actors and critics that you previously exported from the Reinforcement Learning Designer app.

DQN agents have one critic.

DDPG, PPO, and TRPO agents have an actor and a critic.

TD3 and SAC agents have an actor and two critics. When you modify the critic options, the changes apply to both critics.

For more information on creating actors and critics, see Create Actors, Critics, and Policy Objects.

To import an actor or critic, on the corresponding Agent tab, click Import. Then, under either Actor or Critic, select an actor or critic object with action and observation specifications that are compatible with the specifications of the agent.

The app replaces the existing actor or critic in the agent with the selected one. If you import a critic for a SAC or TD3 agent, the app replaces the network for both critics.
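An actor or critic object to import can be taken from any compatible agent in the workspace. The following is a minimal sketch, assuming a default DQN agent built for the predefined CartPole-Discrete environment; the variable names are illustrative.

```matlab
% Assumed example environment and default agent.
env = rlPredefinedEnv("CartPole-Discrete");
obsInfo = getObservationInfo(env);
actInfo = getActionInfo(env);
agent = rlDQNAgent(obsInfo,actInfo);

% Extract the critic (a DQN agent has a single critic).
% This workspace variable can then be selected when importing a critic.
critic = getCritic(agent);

% Command-line counterpart of replacing the critic in an agent.
agent = setCritic(agent,critic);
```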

Edit Actor or Critic Network

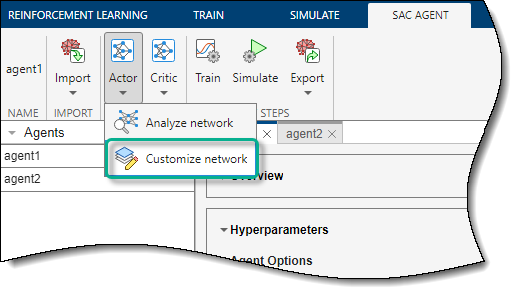

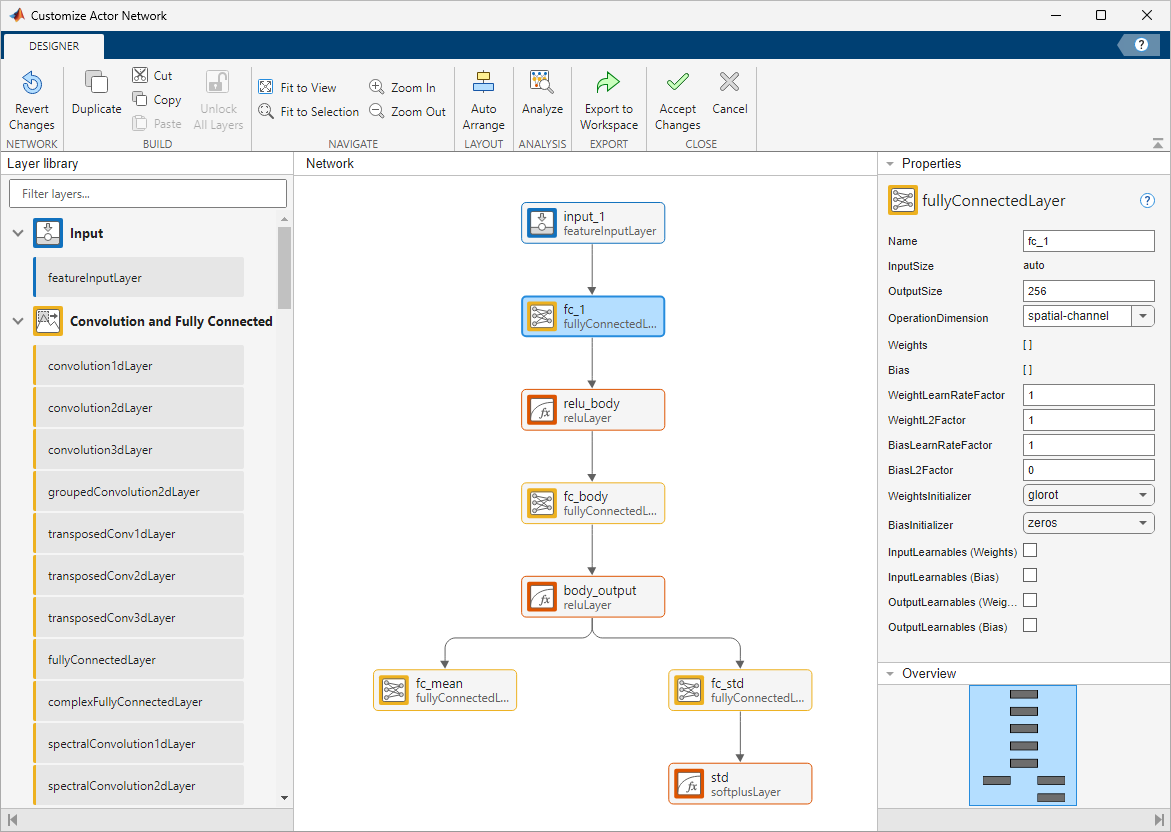

To modify an actor or critic network, on the corresponding Agent tab, under Actor or Critic, select Customize Network.

The Deep Network Designer opens and displays the network, which you can modify.

Click Accept Changes to save the network and close the Deep Network Designer. The app then updates the deep neural network in the corresponding actor or critic.

Another way to customize your actor or critic network is to export the agent's default network to the MATLAB workspace, modify it using the Deep Network Designer app (outside the Reinforcement Learning Designer app), and then import it back into Reinforcement Learning Designer.

For more information on creating deep neural networks for actors and critics, see Create Actors, Critics, and Policy Objects.

Import Actor or Critic Network

You can import an actor or critic network from the MATLAB workspace. To do so, on the corresponding Agent tab, click Import. Then, under either Actor Neural Network or Critic Neural Network, select a network with input and output layers that are compatible with the observation and action specifications of the agent.

The app replaces the deep neural network in the corresponding actor or critic. If you import a critic network for a TD3 or SAC agent, the app replaces the network for both critics.
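At the command line, the network inside a critic can be extracted and put back in a similar way. The following is a minimal sketch, assuming a default DQN agent for the predefined CartPole-Discrete environment. The type of the object that `getModel` returns depends on the release, and the variable names are illustrative.

```matlab
% Assumed example environment and default agent.
env = rlPredefinedEnv("CartPole-Discrete");
obsInfo = getObservationInfo(env);
actInfo = getActionInfo(env);
agent = rlDQNAgent(obsInfo,actInfo);

% Extract the deep neural network from the critic.
critic = getCritic(agent);
criticNet = getModel(critic);

% ... modify criticNet here, for example in Deep Network Designer ...

% Put the (possibly modified) network back into the critic and the agent.
critic = setModel(critic,criticNet);
agent = setCritic(agent,critic);
```

The `criticNet` variable is also the kind of object that can be selected under Critic Neural Network when importing, provided its input and output layers match the agent specifications.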

Export Agents and Agent Components

For a given agent, you can export any of the following to the MATLAB workspace.

Agent

Agent options

Actor or critic

Deep neural network in the actor or critic

To export an agent or agent component, on the corresponding Agent tab, click Export. Then, select the item to export.

The app saves a copy of the agent or agent component in the MATLAB workspace.
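Once exported, an agent is an ordinary workspace variable. The following is a minimal sketch of querying such an agent for an action. A freshly created default DQN agent stands in for the exported one, and `exportedAgent` is a hypothetical variable name.

```matlab
% Stand-in for an agent exported from the app (assumption for illustration).
env = rlPredefinedEnv("CartPole-Discrete");
obsInfo = getObservationInfo(env);
actInfo = getActionInfo(env);
exportedAgent = rlDQNAgent(obsInfo,actInfo);

% Query the agent for an action given a random observation.
obs = rand(obsInfo.Dimension);
act = getAction(exportedAgent,{obs});   % returned as a cell array
```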

See Also

Topics

- Design and Train Agent Using Reinforcement Learning Designer

- Tune Hyperparameters Using Reinforcement Learning Designer

- Reinforcement Learning Agents

- Load MATLAB Environments in Reinforcement Learning Designer

- Load Simulink Environments in Reinforcement Learning Designer

- Specify Training Options in Reinforcement Learning Designer

- Specify Simulation Options in Reinforcement Learning Designer

- Deep Q-Network (DQN) Agent