Differential Equations and Linear Algebra, 5.4: Independence, Basis, and Dimension

From the series: Differential Equations and Linear Algebra

Gilbert Strang, Massachusetts Institute of Technology (MIT)

Vectors v1 to vd are a basis for a subspace if their combinations span the whole subspace and are independent: no basis vector is a combination of the others. Dimension d = number of basis vectors.

Published: 27 Jan 2016

So as long as I'm introducing the idea of a vector space, I better introduce the things that go with it. The idea of its dimension and, all important, the idea of a basis for that space. That space could be all of three dimensional space, the space we live in. In that case, the dimension is three, but what's the meaning of a basis-- a basis for three dimensional space? Or a basis for other spaces?

OK, so I have to explain independence, basis, and dimension. Dimension's easy if you get the first two. OK, independence. Take the vectors a1 = 2, 1, 5 and a2 = 4, 2, 0. Are those vectors independent? Well, if I draw them in three dimensional space, I can imagine 2, 1, 5 going in some direction. Let me draw it. How's that? 2, 1, 5, whatever! Goes there. That's a1. OK.

Now is a2 on the same line? If a2 is on the same line then it would be dependent. The two vectors would be dependent if they're on the same line. But this one is not on that line. a2 is 4, 2, 0. So it doesn't go up at all. It's somewhere in this plane, 4, 2, 0. I'll say there. Whatever. a2. So those are independent.
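The "same line" test can be sketched in a few lines of Python. This is only an illustration, not something from the lecture: the helper names `cross` and `on_same_line` are made up here, and the test relies on the standard fact that two vectors in three dimensions lie on one line through 0 exactly when their cross product is the zero vector.

```python
# Two vectors in 3D are dependent exactly when they lie on the same line
# through the origin, which is the same as their cross product being zero.
# cross and on_same_line are illustrative names, not from the lecture.

def cross(u, v):
    """Cross product of two 3D vectors given as tuples."""
    return (
        u[1] * v[2] - u[2] * v[1],
        u[2] * v[0] - u[0] * v[2],
        u[0] * v[1] - u[1] * v[0],
    )

def on_same_line(u, v):
    """True when u and v are dependent (they lie on one line through 0)."""
    return cross(u, v) == (0, 0, 0)

a1 = (2, 1, 5)
a2 = (4, 2, 0)

print(cross(a1, a2))                 # (-10, 20, 0): not the zero vector
print(on_same_line(a1, a2))          # False, so a1 and a2 are independent
print(on_same_line(a1, (4, 2, 10)))  # True: (4, 2, 10) is 2 times a1
```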

So their combinations give me a space. The combinations of a1 and a2 give me a plane, a flat plane, in three dimensional space. That plane is, I would say, they span the plane. a1 and a2 span a plane. And here's the key word: span.

So there are two vectors. They're in three dimensional space. And the plane they span is all their combinations. That's what we're always doing: taking all the combinations of these vectors. OK.

So there-- and actually, a1 and a2 are a basis for that plane. a1 and a2 are a basis for that plane because their combinations fill the plane. And also, they're independent. I need them both. If I threw away one, I would only have one vector left, and it would only span a line. OK.

Now let me bring in a third vector in three dimensions. Well, what shall I take for that third vector? Ha! Suppose I take a1 plus a2 as my third vector. So 6, 3, 5. What about the vector 6, 3, 5? Well, what do I know? It's obviously special. It's a1 plus a2. It's in the same plane. So if I took a3 equal 6, 3, 5, that would be dependent. The three vectors would be dependent with that a3.

They would span the plane still. Their combinations would still give the plane, but they wouldn't be a basis for the plane. a1 and a2 and a3 together, that's too much, too many vectors for a single plane. The vectors are dependent. And we don't-- a basis has to be independent vectors. You have to need them all. We don't need all three here.
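One way to confirm that a1, a2, and 6, 3, 5 are dependent is the 3 by 3 determinant: three vectors in three dimensions are dependent exactly when the matrix with those columns has determinant 0. The sketch below assumes that test; the lecture itself argues directly from a3 = a1 + a2. The helper name `det3` is illustrative.

```python
# Dependence test for three vectors in 3D: put them in the columns of a
# matrix and compute the determinant. Zero determinant means dependent.
# det3 is an illustrative helper name, not from the lecture.

def det3(c1, c2, c3):
    """Determinant of the 3x3 matrix whose columns are c1, c2, c3."""
    return (
        c1[0] * (c2[1] * c3[2] - c2[2] * c3[1])
        - c2[0] * (c1[1] * c3[2] - c1[2] * c3[1])
        + c3[0] * (c1[1] * c2[2] - c1[2] * c2[1])
    )

a1 = (2, 1, 5)
a2 = (4, 2, 0)
a3 = tuple(x + y for x, y in zip(a1, a2))  # a1 + a2

print(a3)                # (6, 3, 5)
print(det3(a1, a2, a3))  # 0, so the three vectors are dependent
```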

So that's a dependent one. It can't go into a basis with a1 and a2 because the three vectors are dependent. Now let me make a different choice. So that one's dead. That did not do it. All right.

Let me take a3 equal to some other, not a combination of these, but headed off in some new direction. Well, I don't know what that new direction is. Maybe 1, 0, 0. What the heck? I believe-- I hope I'm right-- that 1, 0, 0 is not a combination here. I say 1, 0, 0 goes off. It's pretty short. Here's a3. Better a3 than that loser 6, 3, 5. 1, 0, 0 is a winner. These three vectors--

So now a1, a2, and let me add in a3, all three of them span a-- what do they span? What are all the combinations of a1, a2, a3? It's three dimensional? It's the whole three dimensional space. They span all of 3D, the whole three dimensional space. They're a basis for the whole three dimensional space. They're independent.

So let me-- you see that picture before I move it? a1, a2, a3 are independent. None of them is a combination of the others. They fill a three dimensional space. They're a basis for that three dimensional space. And that space, in this example, is the whole of R3.
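"Basis for the whole three dimensional space" means every vector b is a combination of a1, a2, a3, with exactly one choice of coefficients. As a rough sketch, the coefficients can be found with Cramer's rule. That method is an assumption of this example, not something the lecture uses, and the names `det3`, `coordinates`, and the sample vector b = 3, 1, 10 are made up for illustration.

```python
from fractions import Fraction

# Sketch: find c1, c2, c3 with c1*a1 + c2*a2 + c3*a3 = b by Cramer's rule.
# det3, coordinates, and the sample b are illustrative, not from the lecture.

def det3(c1, c2, c3):
    """Determinant of the 3x3 matrix whose columns are c1, c2, c3."""
    return (
        c1[0] * (c2[1] * c3[2] - c2[2] * c3[1])
        - c2[0] * (c1[1] * c3[2] - c1[2] * c3[1])
        + c3[0] * (c1[1] * c2[2] - c1[2] * c2[1])
    )

def coordinates(b, a1, a2, a3):
    """Coefficients of b in the basis a1, a2, a3 (exact fractions)."""
    d = det3(a1, a2, a3)
    if d == 0:
        raise ValueError("a1, a2, a3 are dependent, so they are not a basis")
    return (
        Fraction(det3(b, a2, a3), d),
        Fraction(det3(a1, b, a3), d),
        Fraction(det3(a1, a2, b), d),
    )

a1, a2, a3 = (2, 1, 5), (4, 2, 0), (1, 0, 0)
b = (3, 1, 10)

c1, c2, c3 = coordinates(b, a1, a2, a3)
print(c1, c2, c3)  # c1 = 2, c2 = -1/2, c3 = 1

# Rebuild b from the combination to confirm the coefficients.
rebuilt = tuple(c1 * x + c2 * y + c3 * z for x, y, z in zip(a1, a2, a3))
print(rebuilt == b)  # True
```

With the dependent choice 6, 3, 5 in place of a3, `coordinates` raises an error, because the determinant is 0 and the three vectors are not a basis.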

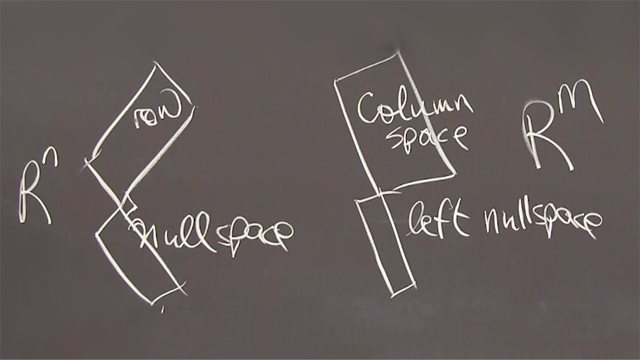

So let me just write down on the next blackboard what I mean. Independent. Independent. So independent columns of a matrix. Independent columns of a matrix A means the only solution to Av equals 0 is v equals 0. So if I have independent columns, then I haven't got any null space. If I have independent columns, then the null space of the matrix is just the 0 vector.

So let me write down that example again. A was the matrix with columns 2, 1, 5 and 4, 2, 0 and 1, 0, 0. So I believe that matrix has independent columns. So its column space is the full three dimensional space. Its null space only contains 0-- let me make that clear that that's a vector. And now I'm ready to write down the idea of a basis.
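The statement "independent columns means the null space is just the 0 vector" can be checked by elimination. The following is a minimal sketch, assuming exact arithmetic with Python's `fractions` module; `null_space` is an illustrative helper written for this example, not a library function. It returns a list of basis vectors for the null space, so an empty list means only v = 0 solves Av = 0.

```python
from fractions import Fraction

def null_space(rows):
    """Basis for the null space of a matrix given as a list of rows.

    Illustrative helper: reduces the matrix to reduced row echelon form,
    then builds one special solution per free column.
    """
    m = [[Fraction(x) for x in row] for row in rows]
    n_rows, n_cols = len(m), len(m[0])
    pivots = []  # pivot column for each pivot row, in order
    r = 0
    for c in range(n_cols):
        if r == n_rows:
            break
        p = next((i for i in range(r, n_rows) if m[i][c] != 0), None)
        if p is None:
            continue  # no pivot in this column: it is a free column
        m[r], m[p] = m[p], m[r]
        pivot = m[r][c]
        m[r] = [x / pivot for x in m[r]]
        for i in range(n_rows):
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [x - f * y for x, y in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
    basis = []
    for free in (c for c in range(n_cols) if c not in pivots):
        v = [Fraction(0)] * n_cols
        v[free] = Fraction(1)
        for row_index, pivot_col in enumerate(pivots):
            v[pivot_col] = -m[row_index][free]
        basis.append(v)
    return basis

# Columns 2,1,5 and 4,2,0 and 1,0,0, written out row by row.
A = [[2, 4, 1],
     [1, 2, 0],
     [5, 0, 0]]

# Same first two columns, but third column 6,3,5 = a1 + a2.
B = [[2, 4, 6],
     [1, 2, 3],
     [5, 0, 5]]

print(null_space(A))  # []: only v = 0 solves Av = 0
print(null_space(B) == [[-1, -1, 1]])  # True: -a1 - a2 + a3 = 0
```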

So what is a basis for the space? A basis for a space, a subspace. Independent vectors. That's the key. Independent vectors that span the space, the subspace. Whatever it is.

By the way, if the column space is all of three dimensional space, as it is here, that's a subspace too. It's the whole space, but the whole space counts as a subspace of itself. And the 0 vector alone counts as the smallest possible. So if we're in three dimensions, the idea of subspaces has-- we have just the 0 vector. Just one point. That's the smallest.

We have the whole three dimensional space. That's the biggest. And then we have all the lines through 0. Those are on the small side. We have all the planes through 0. Those are a bit bigger. And those dimensions are 0, 1, 2, 3. The dimension is told to us by how many basis vectors we need.

So let me look at that and then come to dimension. OK. So independent means that the only-- that no combination, no other combination of the vectors, no combination of these vectors gives the 0 vector except to take 0 of that, 0 of that, and 0 of that. So those are a basis for the column space because they're independent and their combinations give the whole column space. OK.
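For the three columns 2, 1, 5 and 4, 2, 0 and 1, 0, 0, that statement can be worked out by hand. The coefficients c1, c2, c3 below are notation introduced for this worked step.

```latex
c_1 \begin{bmatrix} 2 \\ 1 \\ 5 \end{bmatrix}
+ c_2 \begin{bmatrix} 4 \\ 2 \\ 0 \end{bmatrix}
+ c_3 \begin{bmatrix} 1 \\ 0 \\ 0 \end{bmatrix}
= \begin{bmatrix} 0 \\ 0 \\ 0 \end{bmatrix}
\quad\Longrightarrow\quad
\begin{aligned}
2c_1 + 4c_2 + c_3 &= 0 \\
c_1 + 2c_2 &= 0 \\
5c_1 &= 0
\end{aligned}
```

The third equation gives c1 = 0. Then the second gives c2 = 0, and the first gives c3 = 0. So the only combination that produces the 0 vector is 0 of each.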

And now I wanted to say something about dimensions. OK. Dimension. It's a number. It's the number of basis vectors for the subspace. Oh! But you might say, that the subspace has other bases, not just the one you happen to think of first. And I agree. Many different bases. For this example, all I need to get a basis for, in this case, for three dimensional space is I need three independent vectors. Any three.

But the point is, the point about dimension is that I need exactly three. I can never get two vectors that span all of R3. And I can never get four vectors that are independent in R3. If I have fewer than the dimension number, I don't have enough. They don't span. If I have more than the dimension, they're dependent. They won't be independent. They can't be a basis.
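A small experiment makes the counting concrete. The sketch below counts how many independent vectors a list contains (the rank) by elimination. The function name `rank` and the fourth vector 7, -3, 2 are choices made for this illustration, not taken from the lecture.

```python
from fractions import Fraction

def rank(vectors):
    """Number of independent vectors in the list, found by elimination.

    Illustrative helper; the vectors are treated as the rows of a matrix.
    """
    rows = [[Fraction(x) for x in v] for v in vectors]
    n_cols = len(rows[0]) if rows else 0
    r = 0
    for c in range(n_cols):
        p = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if p is None:
            continue
        rows[r], rows[p] = rows[p], rows[r]
        for i in range(r + 1, len(rows)):
            f = rows[i][c] / rows[r][c]
            rows[i] = [x - f * y for x, y in zip(rows[i], rows[r])]
        r += 1
    return r

two = [(2, 1, 5), (4, 2, 0)]
three = two + [(1, 0, 0)]
four = three + [(7, -3, 2)]  # an arbitrary extra vector

print(rank(two))    # 2: independent, but too few to span 3D space
print(rank(three))  # 3: independent and spanning, so a basis
print(rank(four))   # 3: four vectors, only three independent, so dependent
```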

Every basis has the same number. And that number is the dimension of the subspace. All right, let's just take an example, just with a picture. I'll stay in three dimensional space, but my subspace will just be a plane.

So here I'm in three dimensional space. Good. Now my subspace is a plane. So it goes through the origin, but it's only a plane. So I'm expecting that I could take a vector in the plane, and I could take another vector in the plane, and they could be independent. They are. They're different directions. I couldn't find a third independent vector in the plane. Every basis for the plane--

So here every basis for this plane contains two vectors. Always two. And that number two is the dimension of a plane. Well, I'm just saying the plane there is two dimensional. It's not the same as R2. It's not the same. That plane is a plane in R3. It's not ordinary two dimensional space. But its dimension is two because it takes any vector. And if I didn't like the looks of this one, well, that's no problem. Let me go that way. That's just as good.
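To see two different bases for one plane, here is a rough sketch using the plane spanned by 2, 1, 5 and 4, 2, 0 from earlier in the lecture. The second basis (the sum and the difference of those two vectors) is chosen for this example, and the helper names are illustrative. A vector lies in the plane exactly when it is perpendicular to the plane's normal vector.

```python
# Sketch: one plane through 0 in 3D, two different bases, two vectors each.
# Helper names and the second basis b1, b2 are illustrative choices.

def cross(u, v):
    """Cross product of two 3D vectors given as tuples."""
    return (
        u[1] * v[2] - u[2] * v[1],
        u[2] * v[0] - u[0] * v[2],
        u[0] * v[1] - u[1] * v[0],
    )

def dot(u, v):
    """Dot product of two vectors of the same length."""
    return sum(x * y for x, y in zip(u, v))

a1, a2 = (2, 1, 5), (4, 2, 0)
normal = cross(a1, a2)  # perpendicular to the plane spanned by a1 and a2

b1 = tuple(x + y for x, y in zip(a1, a2))  # a1 + a2
b2 = tuple(x - y for x, y in zip(a1, a2))  # a1 - a2

# b1 and b2 lie in the same plane: both are perpendicular to the normal.
print(dot(normal, b1), dot(normal, b2))  # 0 0

# b1 and b2 are independent: their cross product is not the zero vector.
print(cross(b1, b2))  # (20, -40, 0)
```

Both bases contain two vectors, which matches the dimension of the plane.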

Those two vectors are independent. They span the plane. They're a basis for the plane. The plane is two dimensional. That's the set of key ideas. Independent. Span. Basis. Basis is fundamental. Basis is a bunch of vectors. And dimension is how many vectors.

OK. Those are key ideas in linear algebra. And you'll see them come into the big picture of linear algebra. Thank you.