Differential Equations and Linear Algebra, 6.4b: Similar Matrices, A and B=M^(-1)*A*M

From the series: Differential Equations and Linear Algebra

Gilbert Strang, Massachusetts Institute of Technology (MIT)

A and B are “similar” if B = M^(-1)AM for some invertible matrix M. B then has the same eigenvalues as A.

Published: 27 Jan 2016

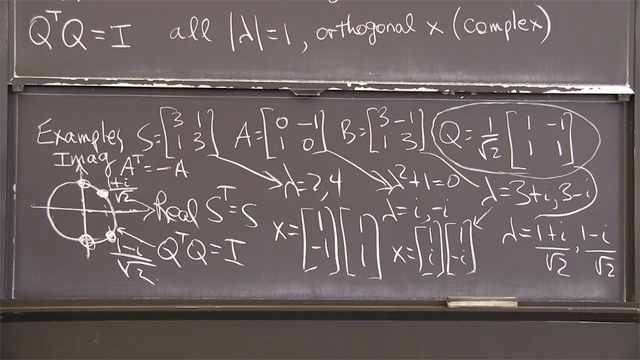

OK, thanks. Here's a second video that involves the matrix exponential. But it has a basic new idea in it: two matrices being called "similar." That word "similar" has a specific meaning. A matrix A is similar to another matrix B if B comes from A this way: B = M^(-1)AM. There's some matrix M, which could be any invertible matrix. I take A, multiply on the right by M and on the left by M inverse. That will probably give me a new matrix. Call it B. That matrix B is called "similar" to A. I'll show you examples of matrices that are similar, but first let's get this definition in mind.

So in general, a lot of matrices are similar. If I have a certain matrix A, I can take any invertible M, and I'll get a similar matrix B. So there are lots of similar matrices, and the point is that all those similar matrices have the same eigenvalues. There's a little family of matrices, all similar to each other and all with the same eigenvalues. Why do they have the same eigenvalues? I'll just show you, in one line.

Suppose B has an eigenvalue lambda, with eigenvector x, so Bx = lambda x. B is M inverse AM, so this says M inverse AMx = lambda x. I want to show that A also has the eigenvalue lambda. OK.

So I look at this and multiply both sides by M. That cancels the M inverse, so on the left I have AMx, and the M shows up on the right-hand side too: lambda Mx. Now I look at AMx = lambda Mx and I say, yes. A has an eigenvector, Mx, with eigenvalue lambda: A times that vector is lambda times that vector. So lambda is an eigenvalue of A. It has a different eigenvector, of course. If two matrices have the same eigenvalues and the same full set of eigenvectors, they're the same matrix. But allowing an M to get in there changes the eigenvectors: originally they were x for B, and now for A they're M times x. It does not change the eigenvalues, because having M on both sides allowed me to bring M over to the right-hand side and make that work. OK.
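A quick numerical check of that one-line argument, as a minimal NumPy sketch. The matrices A and M are arbitrary illustrative choices (assumptions, not from the lecture); any invertible M would do. For each eigenpair (lambda, x) of B, the code confirms that M x is an eigenvector of A with the same lambda.

```python
import numpy as np

# Illustrative choices (assumptions, not from the lecture): any A and any invertible M.
A = np.array([[2.0, 3.0],
              [0.0, 4.0]])
M = np.array([[1.0, 2.0],
              [1.0, 3.0]])          # det(M) = 1, so M is invertible

B = np.linalg.inv(M) @ A @ M        # B = M^(-1) A M is similar to A

# Every eigenpair (lam, x) of B gives the eigenpair (lam, M x) of A.
lams, X = np.linalg.eig(B)
for lam, x in zip(lams, X.T):       # columns of X are the eigenvectors
    Mx = M @ x
    assert np.allclose(A @ Mx, lam * Mx)

print(np.sort(np.linalg.eigvals(A)), np.sort(lams))
```

Both printed arrays hold the eigenvalues 2 and 4 (up to rounding), even though B looks nothing like A.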

Here are some similar matrices. Let me take some; these will all be similar. Say A has rows 2, 3 and 0, 4. OK? That's a matrix A, and since it's triangular I can see its eigenvalues are 2 and 4. I know that it will be similar to the diagonal matrix with 2 and 4 on the diagonal. So there is some matrix M that connects this A with that B, and that B is really capital Lambda, the eigenvalue matrix. We know what matrix connects the original A to its eigenvalue matrix: it's the eigenvector matrix V. So to get this particular B = Lambda, starting from A, I use M = V.
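For this A the eigenvector matrix can be found by hand, and a short NumPy sketch confirms that taking M = V turns A into Lambda. Reading "2, 3, 0, 4" as the rows (2, 3) and (0, 4) is an assumption about the board; the eigenvalues are 2 and 4 either way.

```python
import numpy as np

A = np.array([[2.0, 3.0],
              [0.0, 4.0]])

# Eigenvectors worked out by hand:
#   lambda = 2: (A - 2I)x = 0 gives x = (1, 0)
#   lambda = 4: (A - 4I)x = 0 gives x = (3, 2)
V = np.array([[1.0, 3.0],
              [0.0, 2.0]])

# With M = V, the similar matrix V^(-1) A V is the eigenvalue matrix Lambda.
Lam = np.linalg.inv(V) @ A @ V
print(Lam)
```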

But there are other possibilities. Here is one: the matrix A transpose, with rows 2, 0 and 3, 4. Is A transpose similar to A? Answer: yes.

The transpose has those same eigenvalues, 2 and 4, but different eigenvectors. And the two eigenvector matrices connect the original A with A transpose. In fact, the transpose of any square matrix is similar to the matrix.
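One way to build the connecting M, sketched under the assumption that A has rows (2, 3) and (0, 4): if A = V Lambda V^(-1) and A^T = W Lambda W^(-1), with the columns of V and W listed in the same eigenvalue order, then A^T = M^(-1) A M with M = V W^(-1). The eigenvector matrices below were worked out by hand for this particular A.

```python
import numpy as np

A = np.array([[2.0, 3.0],
              [0.0, 4.0]])
At = A.T

# Columns ordered to match eigenvalues 2 then 4 (hand-computed for this A).
V = np.array([[1.0, 3.0],
              [0.0, 2.0]])      # eigenvectors of A
W = np.array([[2.0, 0.0],
              [-3.0, 1.0]])     # eigenvectors of A transpose

# Both equal Lambda after the change of basis, so M = V W^(-1) links them.
M = V @ np.linalg.inv(W)
print(M)
print(np.linalg.inv(M) @ A @ M)   # should reproduce A transpose
```

Here M works out to rows (5, 3) and (3, 2). This construction relies on a full set of eigenvectors; the general fact that every square matrix is similar to its transpose needs more machinery.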

What if I change the order? The diagonal matrix 4, 0, 0, 2. I've just flipped the 2 and the 4, but of course I haven't changed the eigenvalues. You can find an M so that multiplying on the right by M and on the left by M inverse flips those; a permutation matrix that exchanges the two coordinates does it. So there's another similar matrix. Oh, there could be plenty more.
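A minimal sketch of the flip: the swap matrix P below (rows (0, 1) and (1, 0)) is its own inverse, and conjugating diag(2, 4) by it exchanges the two diagonal entries.

```python
import numpy as np

D = np.diag([2.0, 4.0])

# Permutation matrix that exchanges the two coordinates; P^(-1) = P.
P = np.array([[0.0, 1.0],
              [1.0, 0.0]])

flipped = np.linalg.inv(P) @ D @ P
print(flipped)
```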

All I want is for the eigenvalues to be 4 and 2. Shall I just create some more? Here is a matrix with 0 and 6 on the diagonal. I wanted to get the trace right: 4 plus 2 matches 0 plus 6. Now I have to get the determinant right, and it must be 4 times 2, which is 8. What about a 2 and a minus 4 off the diagonal? Then the trace is correct, 6, and the determinant is 0 times 6 minus 2 times (minus 4), which is 8, also correct. For a 2 by 2 matrix, the trace and determinant fix the eigenvalues, and since 4 and 2 are distinct, this is a similar matrix. All similar matrices: a family of similar matrices with the eigenvalues 4 and 2.
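A NumPy check of this new member of the family. Which off-diagonal slot holds the 2 and which holds the minus 4 is an assumption; the trace and determinant come out the same either way. Because the eigenvalues are distinct, the eigenvector matrix diagonalizes C, which is what puts it in the same family as diag(4, 2).

```python
import numpy as np

# Assumed placement: 0 and 6 on the diagonal, 2 above and -4 below.
C = np.array([[0.0, 2.0],
              [-4.0, 6.0]])

print(np.trace(C), np.linalg.det(C))      # 6 and 8, up to floating-point rounding
print(np.sort(np.linalg.eigvals(C)))      # eigenvalues 2 and 4

# Distinct eigenvalues give a full set of eigenvectors, so V^(-1) C V is diagonal.
lam, V = np.linalg.eig(C)
print(np.round(np.linalg.inv(V) @ C @ V, 10))
```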

Now I want to do another example of similar matrices. What will be different in this example is that there'll be a missing eigenvector. Let me take 2 and 2 on the diagonal, with a 1 above and a 0 below: rows 2, 1 and 0, 2. That has eigenvalues 2 and 2 but only one eigenvector.

Here is another matrix like that. The trace should be 4, and the determinant should be 4. So maybe I put a 2 and a minus 2 off the diagonal. I think that has the correct trace, 4, and the correct determinant, also 4. So it has eigenvalues 2 and 2, and since it isn't 2I, it has only one eigenvector. So it's similar to the first one.
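Counting eigenvectors numerically: for an eigenvalue lambda of an n by n matrix, the number of independent eigenvectors is n minus the rank of (A - lambda I). The diagonal of the second matrix is not stated in the transcript, so K below (0 and 4 on the diagonal) is a hypothetical choice that matches the stated trace, determinant, and off-diagonal 2 and minus 2. The helper `eigenvector_count` is also hypothetical, written just for this sketch.

```python
import numpy as np

J = np.array([[2.0, 1.0],
              [0.0, 2.0]])    # 2 and 2 on the diagonal, a 1 above
K = np.array([[0.0, 2.0],
              [-2.0, 4.0]])   # hypothetical: trace 4, determinant 4

def eigenvector_count(A, lam):
    """Independent eigenvectors for lam: n - rank(A - lam I)."""
    n = A.shape[0]
    return n - np.linalg.matrix_rank(A - lam * np.eye(n))

for name, A in [("J", J), ("K", K), ("2I", 2.0 * np.eye(2))]:
    print(name, np.trace(A), np.linalg.det(A), eigenvector_count(A, 2.0))
```

J and K each have one eigenvector for lambda = 2, while 2I has two. Rank is used instead of `eigvals` because rounding can split a repeated eigenvalue into two nearby values.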

Now here's the point. You might ask: what about the diagonal matrix with 2 and 2 on the diagonal and zeros off it, which is 2I? That has the correct eigenvalues, but it's not similar. There's no matrix M that connects that diagonal matrix with these other matrices, because M inverse times 2I times M is always 2I again. That matrix has no missing eigenvectors. These matrices have one missing eigenvector.
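A small sketch of why 2I sits alone: M^(-1)(2I)M = 2M^(-1)M = 2I for every invertible M. Trying a few random matrices (the seed and sizes are arbitrary choices) always gives back 2I, never a matrix with an off-diagonal entry.

```python
import numpy as np

rng = np.random.default_rng(0)
two_I = 2.0 * np.eye(2)

# M^(-1) (2I) M = 2 M^(-1) M = 2I, so 2I is similar only to itself.
for _ in range(5):
    M = rng.standard_normal((2, 2))    # random, invertible with probability 1
    B = np.linalg.inv(M) @ two_I @ M
    assert np.allclose(B, two_I)

print("every conjugate of 2I was 2I")
```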

What's the best member of this family? It's called the Jordan form. The diagonal matrix 2I didn't belong; it's not in that family.

So I have a whole lot of matrices that are similar. 2I would be the most beautiful, but it's not in the family: it's related, but it's not similar to those. The best member of the family is the Jordan form, rows 2, 1 and 0, 2, with the eigenvalues on the diagonal. Because there's a missing eigenvector, there has to be a sign of that, and it's the 1 above the diagonal. I can't have a 0 there. OK. So that's the idea of similar matrices.
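Finding the M that takes a defective matrix to its Jordan form, as a minimal NumPy sketch. K (0 and 4 on the diagonal, 2 and minus 2 off it) is a hypothetical member of the family with trace 4 and determinant 4. Since K has only one eigenvector v1, the second column of M is a generalized eigenvector v2 solving (K - 2I) v2 = v1; then K v2 = 2 v2 + v1, and that extra v1 is exactly the 1 above the diagonal.

```python
import numpy as np

K = np.array([[0.0, 2.0],
              [-2.0, 4.0]])        # hypothetical member: trace 4, determinant 4
N = K - 2.0 * np.eye(2)             # singular (rank 1)

# Jordan chain: v1 is the only eigenvector, v2 solves N v2 = v1.
v1 = np.array([1.0, 1.0])           # N v1 = 0, checked by hand
# N is singular, so use least squares; this system is consistent,
# and lstsq returns its minimum-norm solution.
v2, *_ = np.linalg.lstsq(N, v1, rcond=None)

M = np.column_stack([v1, v2])
J = np.linalg.inv(M) @ K @ M
print(np.round(J, 10))              # the Jordan form: rows (2, 1) and (0, 2)
```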

And now I do have one more important note: a caution about matrix exponentials. Let me tell you this caution.

Look at e to the A times e to the B, the exponential of A times the exponential of B. My caution is that usually that is not e to the B times e to the A: if I put B and A in the opposite order, I get something different. And it's also not e to the A plus B. Those are all different. If A and B were 1 by 1, just numbers, then of course e^a e^b = e^(a+b) is the great rule for exponentials. But for matrix exponentials, that rule doesn't work unless AB = BA. e to the A times e to the B is not the same as e to the A plus B, and I can show you why.
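A concrete check with SciPy's `scipy.linalg.expm`. The pair A, B below is an illustrative choice (not from the lecture): both are nilpotent, so e^A = I + A and e^B = I + B exactly, and AB is not BA. The three results come out different; the last line confirms that the scalar rule does return when the matrices commute.

```python
import numpy as np
from scipy.linalg import expm

# Illustrative non-commuting pair (A @ B != B @ A).
A = np.array([[0.0, 1.0],
              [0.0, 0.0]])
B = np.array([[0.0, 0.0],
              [1.0, 0.0]])

eAeB = expm(A) @ expm(B)   # e^A e^B
eBeA = expm(B) @ expm(A)   # e^B e^A
eApB = expm(A + B)         # e^(A+B)
print(eAeB, eBeA, eApB, sep="\n\n")

# When the matrices commute (A and 2A), e^A e^(2A) = e^(3A) as for numbers.
assert np.allclose(expm(A) @ expm(2 * A), expm(3 * A))
```

In exact arithmetic e^A e^B has rows (2, 1) and (1, 1), e^B e^A has rows (1, 1) and (1, 2), and e^(A+B) has cosh 1 on the diagonal and sinh 1 off it.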

e to the A is I plus A plus 1/2 A squared and so on. e to the B is I plus B plus 1/2 B squared and so on. I do that multiplication. I get I, I get an A, and I get I times B. Then at second order I get 1/2 A squared, an A times B, and 1/2 B squared. Let me put those down: 1/2 A squared, AB, and 1/2 B squared.

OK. This makes the point. If I multiply the exponentials in this order, I get A times B. What if I multiply them in the other order, e to the B times e to the A? Then the B's will be out in front of the A's, and this term becomes BA, which can be different.

So already I see that the two are different. e to the A times e to the B has A before B. e to the B times e to the A has B before A. And e to the A plus B has a mixture: an I, an A, a B, and 1/2 (A plus B) squared, which is 1/2 of A squared plus AB plus BA plus B squared. Different again.
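The three expansions through second order, collected in one place from the series I + X + X^2/2 + ... for each exponential:

```latex
\begin{aligned}
e^{A}e^{B} &= I + A + B + \tfrac12 A^2 + AB + \tfrac12 B^2 + \cdots \\
e^{B}e^{A} &= I + A + B + \tfrac12 A^2 + BA + \tfrac12 B^2 + \cdots \\
e^{A+B}    &= I + A + B + \tfrac12\left(A^2 + AB + BA + B^2\right) + \cdots
\end{aligned}
```

Subtracting, e^A e^B - e^B e^A = (AB - BA) + ... and e^A e^B - e^(A+B) = 1/2 (AB - BA) + ..., so all three agree at second order exactly when AB = BA.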

Now I have a symmetric mixture of A and B. In the first product I had A before B; in the second I had B on the left side of A.
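A numerical sketch of that second-order difference: scaling A and B by a small eps, the gaps between the three exponentials should shrink like eps^2 times the commutator AB - BA (and half of it for e^(A+B)). The non-commuting pair is an illustrative choice, not from the lecture.

```python
import numpy as np
from scipy.linalg import expm

# Illustrative non-commuting pair.
A = np.array([[0.0, 1.0],
              [0.0, 0.0]])
B = np.array([[0.0, 0.0],
              [1.0, 0.0]])
commutator = A @ B - B @ A

for eps in [1e-1, 1e-2, 1e-3]:
    a, b = eps * A, eps * B
    d1 = expm(a) @ expm(b) - expm(b) @ expm(a)   # expected: eps^2 (AB - BA) + ...
    d2 = expm(a) @ expm(b) - expm(a + b)         # expected: eps^2 (AB - BA)/2 + ...
    err1 = np.abs(d1 / eps**2 - commutator).max()
    err2 = np.abs(d2 / eps**2 - commutator / 2).max()
    print(f"eps={eps:g}  err1={err1:.2e}  err2={err2:.2e}")
```

The error for d2 shrinks roughly in proportion to eps, because the next term in the series is third order.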

So all three of those are different, already in the second-order term of the series that defines those exponentials. And that means that systems of equations whose coefficients change over time are definitely harder. We were able to solve dy/dt = cos(t) y.

Do you remember that this was solvable for a 1 by 1? We integrated cos t and got sin t, and the solution was y(t) = e^(sin t) y(0).

Let me check that by putting it into the differential equation. By the chain rule, the derivative of e^(sin t) is e^(sin t) again, times the derivative of sin t, which is cos t. So dy/dt = cos(t) y, and it works. That's fine as a solution.
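A numerical confirmation of the 1 by 1 formula, as a sketch: integrate dy/dt = cos(t) y with SciPy's `solve_ivp` and compare with e^(sin t) y(0). The initial value and end time are arbitrary choices for illustration.

```python
import numpy as np
from scipy.integrate import solve_ivp

y0 = 1.5   # hypothetical initial value
T = 3.0    # hypothetical end time

# Numerically integrate dy/dt = cos(t) y from t = 0 to t = T.
sol = solve_ivp(lambda t, y: np.cos(t) * y, (0.0, T), [y0],
                rtol=1e-10, atol=1e-12)
numeric = sol.y[0, -1]

formula = np.exp(np.sin(T)) * y0   # y(t) = e^(sin t) y(0)
print(numeric, formula)
```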

But if I have matrices here, the whole thing goes wrong. You could say that the chain rule goes wrong. You can't put the integral up in the exponent, take the derivative, and expect it to come back down in front, because the matrix in the exponent and its derivative need not commute. The simple chain rule does not work for matrix exponentials. And the fact is that we don't have nice formulas for the solutions to linear systems with time-varying coefficients. It became a harder problem when we went from one equation to a system of equations.
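A sketch of the failure. The coefficient matrix A(t), with rows (0, 1) and (t, 0), is a hypothetical example chosen because A(s) A(t) and A(t) A(s) differ when s is not t. The code solves dy/dt = A(t) y numerically and compares it with the 1 by 1 recipe, e to the (integral of A) times y(0); the two answers disagree.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.linalg import expm

# Hypothetical coefficients: A(s) A(t) = [[t, 0], [0, s]] but A(t) A(s) = [[s, 0], [0, t]].
def A(t):
    return np.array([[0.0, 1.0],
                     [t, 0.0]])

y0 = np.array([1.0, 0.0])
T = 2.0

# Accurate numerical solution of dy/dt = A(t) y.
sol = solve_ivp(lambda t, y: A(t) @ y, (0.0, T), y0, rtol=1e-10, atol=1e-12)
numeric = sol.y[:, -1]

# The scalar recipe: exponentiate the integral of A(t) from 0 to T.
integral = np.array([[0.0, T],
                     [T**2 / 2, 0.0]])
guess = expm(integral) @ y0

print(numeric)
print(guess)
```

Here the first component satisfies y'' = t y (Airy's equation), whose solutions are not elementary functions; its value at T = 2 is about 2.73, while the exponential recipe gives cosh 2, about 3.76.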

So this is the caution slide about matrix exponentials. They're beautiful, and they work perfectly if you just have one matrix A. But if two matrices, or a bunch of different matrices, are in there, then you lose the good rules, and you lose the simple formula for the solution.

OK. Thank you.