Differential Equations and Linear Algebra, 8.1b: Examples of Fourier Series

From the series: Differential Equations and Linear Algebra

Gilbert Strang, Massachusetts Institute of Technology (MIT)

Even functions use only cosines (F(–x) = F(x)) and odd functions use only sines. The coefficients a_n and b_n come from integrals of F(x) cos(nx) and F(x) sin(nx).

Published: 27 Jan 2016

This video is to give you more examples of Fourier series. I'll start with a function that's odd. An odd function means that on the left side of 0, I get the negative of what I have on the right side of 0. F at minus x is minus F of x. And it's the sine function that's odd. The cosine function is even, and we will have no cosines here. All the integrals that involve cosines will tell us 0 for the coefficients a_n. What we'll get is the b coefficients, the sine functions.

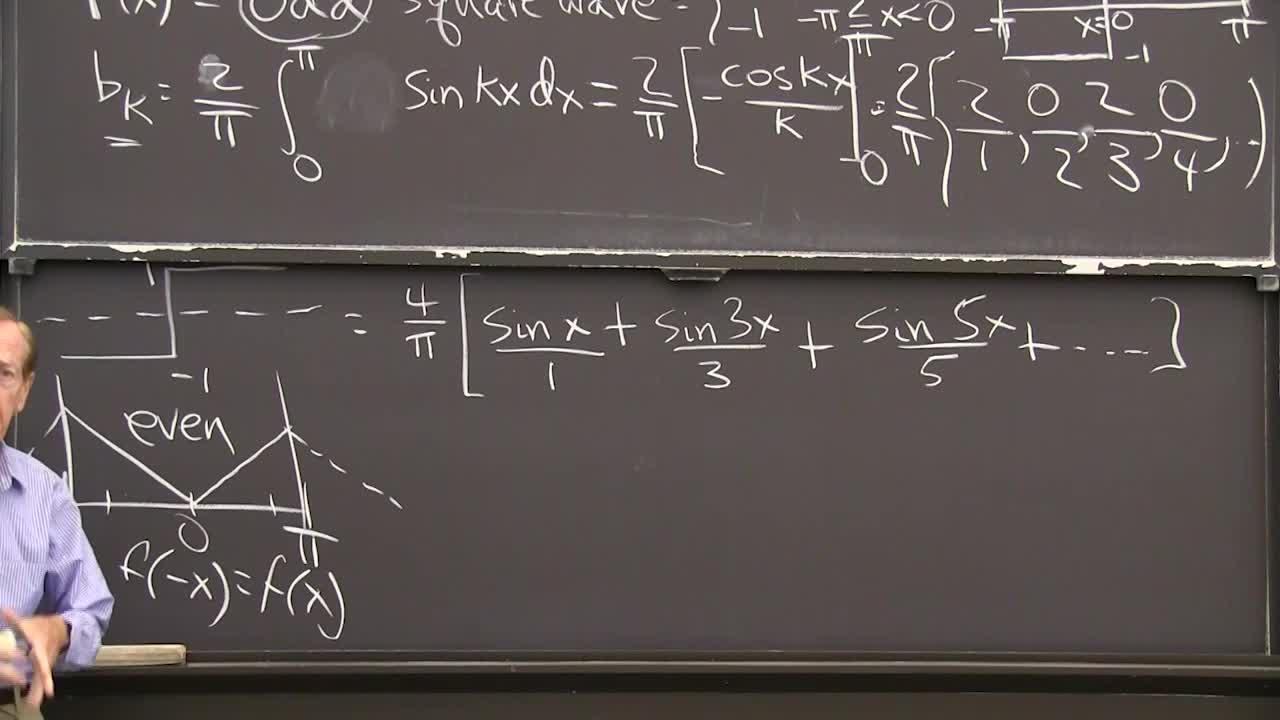

So you see that I chose a simple odd function, minus 1 or 1, which would give a square wave if I continue it on. It will go down, up, down, up in a square wave pattern. And I'm going to express that as a combination of sine functions, smooth waves. And here was the formula from last time for the coefficients bk, except now I'm only integrating over half, over the zero to pi part of the interval, so I double it. So that's an odd function, that's an odd function. When I multiply them, I have an even function. And the integral from minus pi to 0 is just the same as the integral from 0 to pi. So I'll do only 0 to pi and multiply by 2.

But my function on 0 to pi is 1. My nice square wave is just plus 1 there, so I'm just integrating sine kx dx. We can do this. It's minus cosine kx divided by k, right? That's the integral with the 2 over pi factor. Now I have to put in pi and 0 and put in the limits of integration and get the answer. So what do I get? I get 2 over pi.

For k equal 1, the denominator will be 1, and I think the numerator is 2. Yes, when k is 1, I get 2, so this is 4 over pi, which I figured out as the first coefficient. The coefficient b1 is 4 over pi. For the coefficient b2, if I take k equal to 2, I have a 2 down below. But above, I have a 0 because the cosine of 2 pi is the same as the cosine of 0. When I subtract I get nothing, so that's 0.

Now I go to k equals 3. So the k equals 3 will come down here. And now when k is 3, it turns out I get-- they don't cancel, they reinforce. I get another 2. Good if you do these. And when k is 4, I get a 0 again. You see the pattern?

The pattern for the integrals is this: as k goes 1, 2, 3, 4, 5, this part gives me a 2 or a 0 or a 2 or a 0 in order. If you check that, you'll get it.
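The 2, 0, 2, 0 pattern can be checked numerically. The following is a minimal sketch, not part of the lecture: the function names and the midpoint-rule sample count are assumptions chosen for illustration. It compares a numerical integral for b_k with the closed form (2/pi)(1 - cos(k pi))/k that comes from putting the limits pi and 0 into minus cosine kx over k.

```python
import math

def b_coefficient(k, samples=20000):
    """Approximate b_k = (2/pi) * integral from 0 to pi of sin(kx) dx.

    Uses the midpoint rule; `samples` is an arbitrary illustrative choice.
    """
    h = math.pi / samples
    total = sum(math.sin(k * (i + 0.5) * h) for i in range(samples))
    return (2.0 / math.pi) * total * h

def b_closed_form(k):
    """Closed form from evaluating -cos(kx)/k between 0 and pi."""
    return (2.0 / math.pi) * (1.0 - math.cos(k * math.pi)) / k

# Print k, the numerical value, and the closed form side by side.
for k in range(1, 6):
    print(k, round(b_coefficient(k), 6), round(b_closed_form(k), 6))
```

The odd values of k should come out close to 4/(pi k), and the even values close to 0.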

So I see that now for this function, which is better than the delta function also. It's not very smooth. It has jumps. It's a jump function, a step function. I see some decay, some slow decay, in the Fourier coefficients. This factor k is growing so the numbers are going to 0, but not very fast. Not very fast. Because my function is not very smooth.

So now you see-- so if I use those numbers, I'm saying that the square wave, this function, the minus 1 to 1 function, is equal to, let's see. I might as well take that 4 over pi out front. So that's 4 over pi times 1 sine x, 0 sine 2x's, then sine 3x, but with this guy there's a 3 underneath, 0 sine 4x's, sine 5x comes in over 5, and so on.

That's a kind of nice example. It turns out that we have just the odd frequencies 1, 3, 5 in the square wave and they're multiplied by 4 over pi and they're divided by the frequency, so that's the decay.
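To see the series approach the square wave, here is a small sketch that sums the odd-frequency sine terms described above. The function name and the choice of test point x = pi/2 are assumptions for illustration only.

```python
import math

def square_wave_partial_sum(x, terms):
    """Sum the first `terms` odd-frequency sines: (4/pi) * sum sin(kx)/k, k = 1, 3, 5, ..."""
    return (4.0 / math.pi) * sum(
        math.sin((2 * j + 1) * x) / (2 * j + 1) for j in range(terms)
    )

# At x = pi/2 the square wave equals +1; watch the partial sums creep toward it.
for terms in (1, 10, 100, 1000):
    print(terms, square_wave_partial_sum(math.pi / 2, terms))
```

The approach to 1 is slow, which is the 1/k decay showing up in practice.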

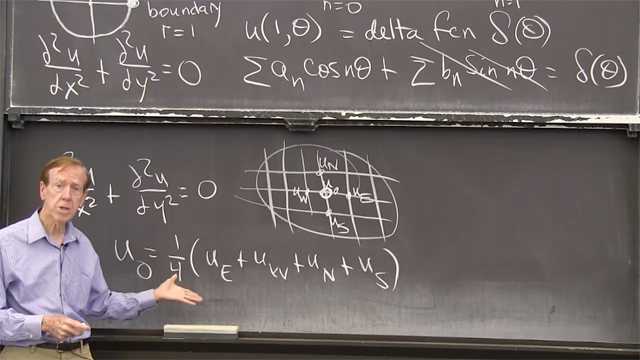

There is an odd function. Why don't I integrate that function? If I want to get an even function to show you an even example, I'll just integrate that square wave. When I integrate that square wave, it'll be even. Maybe I'll start the integral at 0, then it goes up with slope 1. And here the integral is negative, so it's coming down.

So you see it's a-- what am I going to call this function? Sort of a repeating ramp function. It's a ramp down and then up, down and then up. But of course from minus pi to pi, that's where I'm looking. I'm looking between minus pi and pi. And I see that function is even. And what does even mean? That means that my function at minus-- there is minus x-- is the same as the value at x.

And what that means for a Fourier series is cosine. Even functions only have cosine terms. And of course, since I've just integrated, I might as well just integrate that series. So this is this ramp, this repeating ramp function, is going to be 4 over pi. I could figure out the cosine coefficients, the a's, patiently. But why should I do that when I can just integrate?

So the integral of sine x is minus cosine x, so I'll put the minus there, cosine x over 1 I guess. Now what's the integral of this? The integral of sine 3x is a cosine 3x over 3. And there's another 3 and there's a minus sign, which I've got. So I think it's cosine of 3x over 3 squared, because I have one 3 there and I get another 3 from the integration. And similarly here, when I integrate sine 5x I get cos 5x with a 5. And then I already had one 5, so 5 squared. So there you go.

[LAUGHTER]

There's something in freshman calculus which I totally forgot, the constant term. So there is a constant term, the average value, that's a0. I've only found the a1, 2, 3, 4, 5. I haven't found the a0, and that would be the average of that. I don't know, what's the average of this function? It goes from 0 up to pi and it seems like it's pretty-- I didn't draw it well, but half way. I think probably its average is about pi over 2, right? Let's hope that's right.

So let me sneak in the constant term here. The ramp, I think, has a constant term pi over 2. That's the average value. It would come from the formula and those-- well, what do you see now? That's the other example I wanted you to see. You see a faster drop off. 1, 9, 25, 49, whatever. It's dropping off with k squared.
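The constant pi over 2 and the minus sign can be checked with a short sketch. It assumes the ramp is the absolute value of x on the interval from minus pi to pi, which is what integrating the square wave from 0 gives; the function name is hypothetical.

```python
import math

def ramp_partial_sum(x, terms):
    """pi/2 - (4/pi) * sum cos(kx)/k^2 over the first `terms` odd k."""
    cosines = sum(
        math.cos((2 * j + 1) * x) / (2 * j + 1) ** 2 for j in range(terms)
    )
    return math.pi / 2 - (4.0 / math.pi) * cosines

# Compare the series with |x| at a few points in [-pi, pi].
for x in (0.0, 1.0, -2.0, math.pi):
    print(x, abs(x), ramp_partial_sum(x, 50))
```

With only 50 terms the series already sits close to the ramp everywhere on the interval, corners included.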

And the reason it drops off faster than this one is that it's smoother. This function has corners. This function has jumps. So a jump is one level more rough, more noisy, than a ramp function. The smoother function has faster decay. Smooth-- let me write those words-- smooth function connects with faster decay. Faster drop off of the Fourier coefficients.

It means that the Fourier series is much more useful. Fourier series is really terrific for functions that are smooth because then you only need to keep a few terms. For functions that have jumps or delta functions, you have to keep many, many terms and the Fourier series calculation is much more difficult.
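One rough way to put numbers on "a few terms" versus "many, many terms" is to count how many odd-frequency coefficients stay above a tolerance. This is only a sketch: the tolerance of 0.001 and the function name are assumptions, and coefficient size is a stand-in for accuracy, not a full error analysis.

```python
import math

def terms_above_tolerance(power, tolerance):
    """Count odd k with 4/(pi * k**power) >= tolerance.

    power=1 models the square wave (1/k decay),
    power=2 models the ramp (1/k^2 decay).
    """
    count = 0
    k = 1
    while 4.0 / (math.pi * k ** power) >= tolerance:
        count += 1
        k += 2  # only odd frequencies appear in these two series
    return count

print("square wave terms:", terms_above_tolerance(1, 1e-3))
print("ramp terms:", terms_above_tolerance(2, 1e-3))
```

The count for the ramp is far smaller, which is the practical meaning of faster decay.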

So that's the second example. Let's see, what more shall I say? We learned something about integrating and taking the derivative so let me end with just two basic rules. Two basic rules.

So the rule for derivatives. What's the Fourier series of df dx? And the second will be the rule for shift. What's the Fourier series for f of x minus a shift? You know that when I change x to x minus d, all that does is shift the graph by a distance d. That should do something nice to its Fourier coefficient.

So I'm starting with-- oh, I haven't given you any practice with a complex case. This would be a good time. Suppose my start is f of x equals the sum of ck, a complex coefficient, times e to the ikx, the complex exponential. And you'll remember that sum went from minus infinity to infinity.

So I have a Fourier series. I'm imagining I know the coefficients and I want to say, what happens if I take the derivative? Well, just take the derivative. You'll have a sum where the derivative brings down a factor ik. So that's the rule. Simple, but important. That's why Fourier series is so great, because you have orthogonality and then you have this simple rule with derivatives. It just brings a factor ik, so the derivative makes your function noisier and you have larger coefficients.
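Written out, the derivative rule looks like this. The second line is a check added here, not something written in the lecture: it differentiates one term of the ramp series and gets back the matching term of the square wave series.

```latex
f(x) = \sum_{k=-\infty}^{\infty} c_k \, e^{ikx}
\quad\Longrightarrow\quad
\frac{df}{dx} = \sum_{k=-\infty}^{\infty} (ik)\, c_k \, e^{ikx}

% Check with the ramp and square wave, one odd frequency k at a time:
\frac{d}{dx}\left[ -\frac{4}{\pi}\,\frac{\cos kx}{k^{2}} \right]
= \frac{4}{\pi}\,\frac{\sin kx}{k}
```

Each differentiation multiplies the k-th coefficient by a factor of size k, so the 1/k squared decay of the ramp becomes the 1/k decay of the square wave.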

And if I do f of x minus d, so I'll change x to x minus d, so I'll see the sum of ck e to the ikx, e to the minus ikd, right? I've put in x minus d instead of x. And here I see the Fourier coefficient for a shifted function-- so the ck was a Fourier coefficient for f. When I shift f, it multiplies that coefficient by a phase change. The magnitude stayed the same because e to the minus ikd is a number-- everybody recognizes that as a number of magnitude 1, and it just gives a phase shift. Those are two good rules that show why you can use Fourier series in differential equations and in difference equations. Thank you.
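The shift rule is easy to test on a short series. In this sketch the coefficients, the shift d, and the evaluation point x are all made-up values for illustration, and the function names are hypothetical.

```python
import cmath

# Hypothetical coefficients c_k for k = -2..2, chosen only for illustration.
coeffs = {-2: 0.5 - 0.25j, -1: 1.0 + 0.5j, 0: 0.3 + 0j, 1: 1.0 - 0.5j, 2: 0.5 + 0.25j}

def evaluate(c, x):
    """Evaluate the finite series sum of c_k * e^{ikx}."""
    return sum(ck * cmath.exp(1j * k * x) for k, ck in c.items())

def shifted_coefficients(c, d):
    """Coefficients of f(x - d): each c_k is multiplied by e^{-ikd}."""
    return {k: ck * cmath.exp(-1j * k * d) for k, ck in c.items()}

d = 0.7  # arbitrary shift
x = 1.3  # arbitrary evaluation point
shifted = shifted_coefficients(coeffs, d)

lhs = evaluate(coeffs, x - d)  # f(x - d) computed directly
rhs = evaluate(shifted, x)     # same value from the phase-shifted coefficients

print("difference:", abs(lhs - rhs))
print("magnitude changes:", [abs(shifted[k]) - abs(coeffs[k]) for k in coeffs])
```

Both printed quantities should be zero up to rounding: the shifted series reproduces f of x minus d, and every coefficient keeps its magnitude.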