Training and Prediction in MATLAB and Simulink Implementation | How to Estimate Battery State of Charge Using Deep Learning, Part 4

From the series: How to Estimate Battery State of Charge Using Deep Learning

Walk through the steps of training the neural network with voltage, current, and temperature measurements and SOC as a response.

Once the neural network is working properly, use it inside a Simulink block to streamline its implementation inside a Simulink model of the entire BMS.

Published: 27 May 2021

Now, I'll walk you through the MATLAB script that does the training and testing of the network. The first thing is to create datastores to contain the training and testing data. Datastores are MATLAB constructs that allow us to store large amounts of data, even larger than the memory of the computer. Separate datastores are used to contain the inputs, or in machine learning terms, predictors, and the outputs, also known as responses.

Next, we combine all training data sets into one structure. Depending on the size of the training data, it may be necessary to split it into many batches. This is not the case here, so we keep that equal to one. The validation data set is set to be the same as the testing data set, but this can be changed.

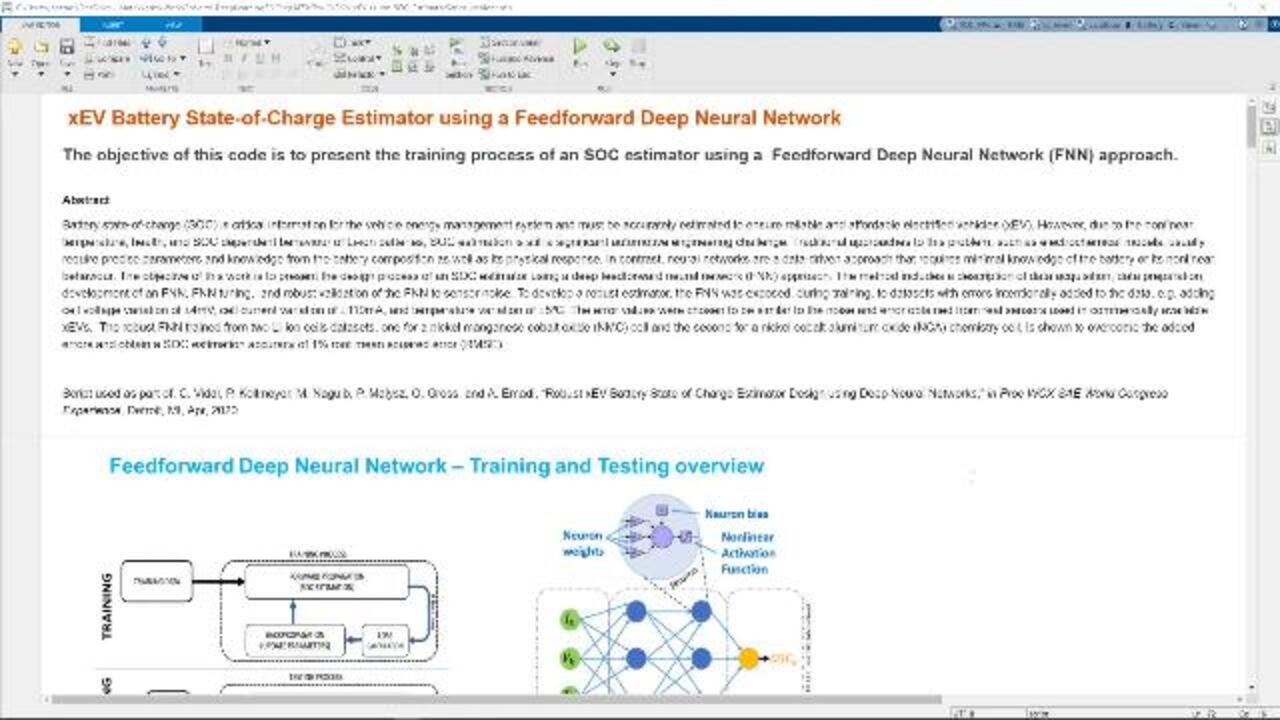

Now, it is time to define the network architecture. As explained earlier by Carlos, the system will have five predictors: voltage, current, temperature, average voltage, and average current. It will have one response, the state of charge.
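To make the five-predictor layout concrete, here is a minimal sketch in Python rather than MATLAB. It is not the script from the video. It assumes the average voltage and average current are causal moving averages over a fixed window; the window length and the function names `moving_average` and `build_predictors` are hypothetical choices for illustration only.

```python
def moving_average(samples, window):
    """Causal moving average: mean of the current and up to window-1 previous samples."""
    averaged = []
    running_sum = 0.0
    for i, value in enumerate(samples):
        running_sum += value
        if i >= window:
            # Drop the sample that just left the window.
            running_sum -= samples[i - window]
        averaged.append(running_sum / min(i + 1, window))
    return averaged


def build_predictors(voltage, current, temperature, window):
    """Return one row per time step: [V, I, T, average V, average I]."""
    avg_v = moving_average(voltage, window)
    avg_i = moving_average(current, window)
    return [list(row) for row in zip(voltage, current, temperature, avg_v, avg_i)]


# Made-up measurements, only to show the shape of the predictor matrix.
voltage = [4.1, 4.0, 3.9, 3.8]
current = [-1.0, -2.0, -2.0, -1.0]
temperature = [25.0, 25.0, 25.0, 25.0]
rows = build_predictors(voltage, current, temperature, window=2)
```

Each row in `rows` has five entries, matching the five inputs the network expects, and the matching state-of-charge value at that time step would be the single response.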

In this section, we set the number of hidden units, the number of iterations, or epochs, the number of epochs between drops in the learning rate, the initial learning rate, the drop factor, and the validation frequency. Last, because we're going to train the network with a different parameter seed every time, we will repeat the training process five times and keep the trained network with the smallest error.
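The two ideas in this step, a learning rate that drops every fixed number of epochs and a training run that is repeated with different seeds so the best result can be kept, can be sketched as follows. This is a Python illustration under stated assumptions, not the MATLAB script: `train_once` is a hypothetical toy stand-in that fits a single weight by gradient descent, and the rate, drop factor, and drop period are made-up values.

```python
import random


def piecewise_learning_rate(epoch, initial_rate, drop_factor, drop_period):
    """Learning rate at a 0-based epoch: multiplied by drop_factor every drop_period epochs."""
    return initial_rate * drop_factor ** (epoch // drop_period)


def train_once(seed, epochs=200):
    """Toy stand-in for one training run: fit y = w * x starting from a random weight."""
    rng = random.Random(seed)
    xs = [0.1 * i for i in range(1, 11)]
    ys = [0.5 * x for x in xs]
    w = rng.uniform(-1.0, 1.0)  # the seed only changes this starting point
    for epoch in range(epochs):
        rate = piecewise_learning_rate(epoch, initial_rate=0.1,
                                       drop_factor=0.5, drop_period=100)
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        w -= rate * grad
    rmse = (sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)) ** 0.5
    return w, rmse


def best_of(repeats):
    """Run training once per seed and keep the run with the smallest error."""
    runs = [train_once(seed) for seed in range(repeats)]
    return min(runs, key=lambda run: run[1])


best_w, best_rmse = best_of(5)
```

Keeping the best of several runs costs proportionally more training time, which is why the repeat count shows up as a multiplier in the timing estimates.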

Once the neural network is properly designed and configured, we can proceed to its training. The results published by the work's authors correspond to 5,100 training epochs. For the purpose of today's discussion, I'll show far fewer epochs, both using a CPU on my laptop computer and a GPU on a lease machine in our headquarters in Natick.

This is what MATLAB shows when we start training. Based on the time it takes to complete each iteration, we can estimate that 5,100 epochs times 5 repeats would take about 20 hours to complete on the CPU. Let's compare this against training using a GPU. When we use a GPU, it takes about one minute to complete 100 epochs. Extrapolating to 5,100 epochs and 5 repeats, that is, 51 minutes times 5, we obtain 4.25 hours, roughly a fivefold speedup with respect to using a CPU.
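The extrapolation is simple arithmetic, and it can be checked with a few lines of Python. The figures are the ones quoted in the talk (about one minute per 100 epochs on the GPU, 5,100 epochs, 5 repeats, about 20 hours on the CPU); the function name `extrapolated_hours` is just an illustrative choice.

```python
def extrapolated_hours(minutes_per_block, epochs_per_block, total_epochs, repeats):
    """Scale a measured time for a block of epochs up to the full training plan."""
    blocks = total_epochs / epochs_per_block
    return blocks * minutes_per_block * repeats / 60.0


gpu_hours = extrapolated_hours(minutes_per_block=1.0, epochs_per_block=100,
                               total_epochs=5100, repeats=5)
cpu_hours = 20.0  # estimate quoted in the talk
speedup = cpu_hours / gpu_hours

print(f"GPU estimate: {gpu_hours:.2f} h, speedup: {speedup:.1f}x")
```

The GPU estimate works out to 51 × 5 = 255 minutes, or 4.25 hours, and 20 / 4.25 is about 4.7, which is where the "roughly five times" figure comes from.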

Now, let's go back to the results of the 5,100 epochs that we had saved. Once the neural network is trained, we can test it against experimental data that the network never saw before. The root mean square error is quite low, between 1% and 2%, depending on the temperature. The maximum error for the testing data is shown here, and it is larger at low temperatures, as expected. These are the neural network predictions for SOC at different temperatures. There's excellent agreement between prediction and experiment.
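For reference, the two error measures mentioned here can be computed as in the Python sketch below. The SOC values are made up purely to exercise the functions and are not results from the video; SOC is expressed as a fraction between 0 and 1, so multiplying by 100 gives a percentage.

```python
import math


def rmse(predicted, measured):
    """Root mean square error between two equal-length sequences."""
    squared = [(p - m) ** 2 for p, m in zip(predicted, measured)]
    return math.sqrt(sum(squared) / len(squared))


def max_abs_error(predicted, measured):
    """Largest absolute difference between prediction and measurement."""
    return max(abs(p - m) for p, m in zip(predicted, measured))


# Illustrative values only (SOC as a fraction of full charge).
measured_soc = [1.00, 0.80, 0.60, 0.40]
predicted_soc = [0.99, 0.82, 0.59, 0.41]

print(f"RMSE: {100 * rmse(predicted_soc, measured_soc):.2f} %")
print(f"Max error: {100 * max_abs_error(predicted_soc, measured_soc):.2f} %")
```

The RMSE summarizes the typical error over the whole test, while the maximum error captures the single worst point, which is why the two can behave differently across temperatures.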

OK, so that was the MATLAB part. Carlos and Phil, do you have anything to add?

Thank you, Javier. I would just like to add that the script is also shared online in the Mendeley Data repository, and a link is included at the end of the presentation.

We are now ready to deploy the trained network in Simulink. This is useful if we already have an established workflow for BMS design in Simulink. As described in past webinars, Simulink provides a natural path for BMS algorithm development and automated code generation. Starting from MATLAB release R2020b, Simulink offers a block called Predict, with the functionality of a trained deep neural network. This will allow us to include an SOC estimation feature in a BMS model.

When we trained the network in MATLAB, we stored the network parameters and architecture in a network object that we call net. We can now use this net object directly in Simulink, inside that Predict block. Doing so, the block will accept an input signal with the dimensionality of the input layer of the neural network, and will output the prediction. Let's reproduce in Simulink the results we obtained in MATLAB by running the Simulink model. The network performs very well, much faster than real time, and it is now in a form that can be used directly in a BMS model developed in Simulink.

OK, let's now summarize what we've shared with you in this event. We began with a problem statement: the importance and challenges associated with battery state of charge estimation. As an alternative to the state-of-the-art techniques, we presented the work and results of a team at McMaster University on the use of MATLAB and Deep Learning Toolbox to create a feed-forward neural network for the estimation of state of charge.

Using data from two different cell chemistries and multiple temperatures, that neural network could predict state of charge to within about 2% error. Finally, we showed how to deploy the trained network in Simulink, using a new block called Predict. Thank you very much to my guests, and thank you all for kindly sharing your time with us today. See you next time.