
I have been a loyal MATLAB user for 25 years, starting from my university days. While many of my peers migrated to Python, I stayed for the stability, compatibility, and clean environment. However, I am finding the 2025 version exceptionally laggy. Despite running it on a $10k high-end machine, simple tasks like viewing variables and plotting take up to 60 seconds - actions that were near instantaneous in the 2020 version. I want to continue with MATLAB, but this performance gap is a major hurdle and irritation. I hope these optimization issues can be addressed quickly.

Short version: MathWorks have released the MATLAB Agentic Toolkit which will significantly improve the life of anyone who is using MATLAB and Simulink with agentic AI systems such as Claude Code or OpenAI Codex. Go and get it from here: https://github.com/matlab/matlab-agentic-toolkit

Long version: see "Introducing the MATLAB Agentic Toolkit" on The MATLAB Blog.

MATLAB EXPO India | 7 May | Bengaluru

Get inspired by the latest trends and real-world customer success stories transforming industries. Learn from trusted experts across 4 tracks.

- AI & Autonomous Systems

- Electrification

- Systems & Software Engineering

- Radar, Wireless & HDL

Register at bit.ly/matlabexpocommunity

Digital Twin Development of PEARL Autonomous Surface System Thermal Management

The top session of the countdown showcases how the PEARL engineering team used a digital twin to solve real‑world thermal challenges in a solar‑powered autonomous marine platform operating in extreme environments. After thermal shutdown events in the field, the team built a model that predicts temperatures at multiple locations with ~1% accuracy, while balancing accuracy with model complexity.

Beyond the technology, this keynote delivers practical lessons for predictive modeling and digital twins that apply well beyond marine systems.

We hope you’ve enjoyed the Top 10 countdown series, and a big thank‑you to Olivier de Weck at Massachusetts Institute of Technology for delivering such a compelling and insightful keynote.

🎥 If you missed it live, be sure to watch the recording to see why it earned the #1 spot at MATLAB EXPO 2026.

MATLAB EXPO India is Back!

This in-person event brings together engineers, scientists, and researchers to explore the latest trends in engineering and science, and discover new MATLAB and Simulink capabilities to apply to your work.

May 7, 2026 | Bengaluru

Register at bit.ly/matlabexpocommunity

It’s no surprise this keynote landed at #2. MaryAnn Freeman, Senior Director of Engineering, AI, and Data Science, explores how AI, especially generative AI, is transforming the way engineers design, build, and innovate. From accelerating the design loop with faster, data‑driven solutions, to blending human creativity with AI insights, to evolving engineering tools that turn ideas into build‑ready systems, this keynote shows how embedded intelligence helps engineers push past traditional limits and bridge imagination with real‑world impact.

If you’re curious about how AI is reshaping engineering workflows today (and what that means for the future of design), this is a must‑watch.

👉 Watch the keynote recording and see why it was one of the most popular sessions of MATLAB EXPO Online 2025.

PLEASE, PLEASE, PLEASE... make MATLAB Copilot available as an option with a home license.

Please change the documentation window (https://www.mathworks.com/help/index.html) so I don't have to first click a magnifying glass before I can get to a text field to enter my search term.

Software‑defined vehicles are becoming reality—and this #3 ranked session shows how. In this keynote, Daniel Scurtu (NXP) demonstrates how MathWorks and NXP are working together to accelerate system‑level embedded development.

🔋 Using a vehicle electrification demo that runs across multiple NXP processors, you’ll see:

- Model‑Based Design workflows from concept to deployment

- Intelligent battery management and motor control

- Automatic code generation and hardware deployment

- ☁️ Real‑time cloud analytics and over‑the‑air updates

🛠️ Featuring MATLAB and Simulink products alongside NXP tools like Model-based Design Toolbox (MBDT), S32 Design Studio IDE, and Real-Time Drivers (RTD), this session highlights an end‑to‑end approach that reduces complexity and speeds the transition to software‑defined vehicles.

Hi everyone,

Some of you may remember my earlier post. Quick version: I'm a biomed PhD student, I use MATLAB daily, and I noticed that AI coding tools often suggest functions that don't exist in R2025b or use deprecated ones. So I built skills that teach them what actually works.

v2.0 adds 54 template `.m` scripts, rewrites all knowledge cards based on blind testing, and verifies every function call against live MATLAB. I tested each skill on 17 prompts and caught 8 hallucinated functions across 5 toolboxes (Medical Imaging, Deep Learning, Image Processing, Stats-ML, Wavelet).

Give it a spin!

Repo: matlab-toolbox-skills

The skills follow the Agent Skills open standard, so they also work with Codex, Gemini CLI, Claude Code and others. If you use the official MATLAB MCP Server from MathWorks, these skills complement it: the MCP server executes your code, the skills help the AI write good code to begin with.

One ask

How do we measure performance and evaluate agent skills? We can run blind tests and catch hallucinated functions, but that only covers what we thought to test. The honest answer is that the best way to evaluate these is community consensus and real-world testimonials. How are you using them? What worked? What still broke?

Your use cases and feedback are the most reliable eval I can get, and as a student building this, they're also the real motivation for me to keep going. If a skill saved you from a hallucinated function or pointed you to the right function call, I'd love to hear about it. If something is still wrong, I need to hear about it.

Issues, PRs, or just a reply here. Star the repo if it saved you time.

Thanks!

Happy Spring! and Happy Coding in Matlab!

Best,

Ritish

Dear all,

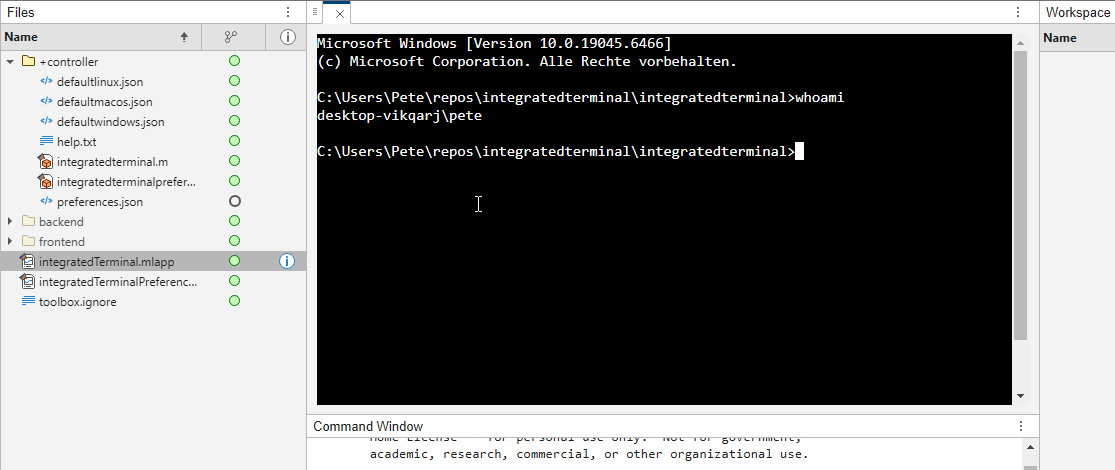

Recently I started working on a VS Code-style integrated terminal for the MATLAB IDE.

The terminal is installed as an app and runs inside a docked figure. You can launch the terminal by clicking on the app icon, running the command integratedTerminal or via keyboard shortcut.

It's possible to change the shell which is used. For example, I can set the shell path to C://Git//bin//bash.exe and use Git Bash on Windows. You can also change the theme. You can run multiple terminals.

I hope you like it and any feedback will be much appreciated. As soon as it's stable enough I can release it as a toolbox.

What’s New in MATLAB and Simulink in 2025

If you missed this session live, this is one of those “everyone’s talking about it” updates you’ll want to catch up on. 👀

This session is packed with the kinds of enhancements that quietly (and not so quietly) change how you work every day.

Here’s why it earned a spot in our Top 4:

- A redesigned MATLAB desktop with customizable sidebars and light/dark themes—built to adapt to how you work

- New side panels for coding and development tasks, plus more control over organizing and customizing figures

- MATLAB Copilot, a generative AI assistant optimized for MATLAB to help you explore ideas, learn techniques, and boost productivity directly in the desktop

- Simulink workflow improvements like a redesigned Simulink scope, more detailed info in quick insert, and automatic signal line straightening

- Enhanced Python integration across MATLAB and Simulink

- New AI deployment options optimized for Qualcomm and Infineon hardware targets

If staying current with MATLAB and Simulink is part of your role—or your edge—this session is a must‑watch. Missing it means missing context for features that will shape how you work in 2026 and beyond.

💬 Discussion topic:

Which single update from this release do you think will most improve your day‑to‑day workflow, and why?

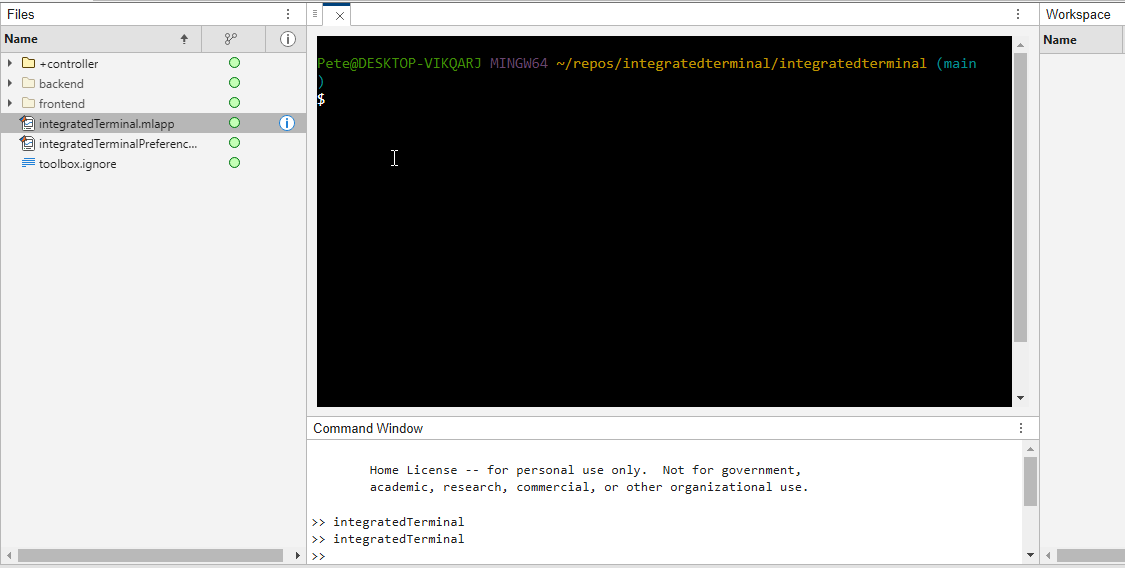

A Live Script can be converted to an HTML5 framework web application with AI as described in Double Pendulum Chaos Explorer: From HTML5 Prototype to MATLAB interactive application with AI. I have recently converted the Live Script Classical Stark Effect to a web application supporting a 3D twirlable display of motion of a particle subject to an inverse square law force plus an additional constant force - the problem known as the classical Stark effect.

The web application deployed to GitHub may be launched here and documents its dependencies below the interactive application. The files are available at Classical Stark Effect — Interactive Web Simulation. One gotcha was the need to enable hardware acceleration in Chrome (no problem in Safari) to support a 3D twirlable display. If hardware acceleration is disabled in Chrome, the application provides a warning and replaces the 3D twirlable display with a 2D alternate.

The conversion of the script to a web application was performed with Perplexity.ai. The GitHub deployment was accomplished with Anthropic's Claude using the open source GitHub CLI. With the gh CLI (already installed and authenticated on my Mac) via osascript, and Claude connected to my file system via MCP and an ngrok server, Claude executed on my Mac the following sequence of steps:

1. git init

Creates a hidden .git/ directory in the staging folder, initializing it as a local git repository. Before this command the folder is just a plain directory; after it, git can track files there. Run once per new project.

2. git branch -M main

Renames the default branch to main. Older git versions default to master; GitHub now expects main. The -M flag forces the rename even if main already exists. Must run after git init and before the first commit.

3. git add -A

Stages all files in the directory tree for the next commit. The -A flag means "all" -- new files, modified files, and deleted files are all included. This does not write anything to GitHub; it only updates git's internal index (the staging area) on your local machine.

4. git commit -m 'Initial release: Classical Stark Effect Interactive Simulation'

Takes everything in the staging area and freezes it into a permanent commit object stored in .git/. This is the snapshot that will be pushed. The -m flag provides the commit message inline. After this command, git knows exactly what files exist and what their contents are -- gh repo create --push will send exactly this snapshot.

5. gh repo create ClassicalStarkEffect --public --source=. --push

Three things happen in sequence inside this one command:

- gh repo create ClassicalStarkEffect --public -- calls the GitHub API to create a new empty public repository named ClassicalStarkEffect under the authenticated account (DuncanCarlsmith).

- --source=. -- tells gh to treat the current directory as the local git repo. It reads .git/ to find the commits and configures the remote.

- --push -- sets the new GitHub repo as origin and runs the equivalent of git push origin main, sending the commit from step 4 up to GitHub.

Without steps 1-4 having run first, --push would have nothing to send and the repo would land empty.

6. gh api repos/DuncanCarlsmith/ClassicalStarkEffect/pages --method POST -f build_type=legacy -f source[branch]=main -f 'source[path]=/'

Calls the GitHub REST API directly to enable GitHub Pages on the repo. Breaking down the flags:

- --method POST -- this is a create operation (not a read), so it uses HTTP POST.

- -f build_type=legacy -- critical flag. Tells GitHub to serve files directly from the branch. The alternative (workflow) would expect a .github/workflows/ Actions file to build and deploy the site, which doesn't exist here, and would produce a permanent 404.

- -f source[branch]=main -- serve from the main branch.

- -f 'source[path]=/' -- serve from the root of the branch (as opposed to a /docs subdirectory).

This is the API equivalent of going to Settings > Pages in the GitHub web UI and setting Branch: main, Folder: / (root), clicking Save.

7. curl -s -o /dev/null -w "%{http_code}" https://duncancarlsmith.github.io/ClassicalStarkEffect/

Not a git or gh command, but the verification step. GitHub Pages takes ~60 seconds to build after step 6. This curl fetches the live URL and prints only the HTTP status code (-w "%{http_code}"), discarding the body (-o /dev/null) and suppressing progress output (-s). 200 means live; 404 means still building.
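The verification in step 7 can also be scripted as a polling loop rather than a single check. The following is a minimal sketch, not part of the deployment described above: the function names `http_status` and `wait_until_live`, the retry count, and the delay are all assumptions chosen for illustration.

```python
import time
import urllib.error
import urllib.request


def http_status(url, timeout=10.0):
    """Return the HTTP status code for url, like curl -w '%{http_code}'."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # urllib raises on 4xx/5xx; a 404 here usually means "still building".
        return err.code


def wait_until_live(url, fetch=http_status, attempts=12, delay=10.0,
                    sleep=time.sleep):
    """Poll url until it returns 200.

    Returns the number of tries it took, or None if the page never went live.
    fetch and sleep are injectable so the loop can be exercised offline.
    """
    for attempt in range(1, attempts + 1):
        if fetch(url) == 200:
            return attempt
        if attempt < attempts:
            sleep(delay)
    return None
```

Calling `wait_until_live("https://duncancarlsmith.github.io/ClassicalStarkEffect/")` would retry for roughly two minutes with these defaults, which comfortably covers the ~60 second build time mentioned above.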

🤖 What does it take to make robotic motion feel… human?

In this session, Tetsushi Sotowa shares how NSK is combining advanced control techniques with deep learning to enable human‑like grasping in electric grippers.

You’ll see a real‑world case study featuring:

- Bilateral and force control systems developed in‑house

- MATLAB and Simulink–based control workflows

- Deep learning integration using Deep Learning Toolbox

- A practical path from mechatronics research to intelligent actuation

The result: an AI‑enhanced actuator capable of more natural, responsive grasping—bringing robotics one step closer to human motion.

👉 Interested in AI‑driven robotics and advanced control? Check out the session now from MATLAB EXPO 2025.

MATLAB MCP Core Server v0.6.0 has been released on GitHub: https://github.com/matlab/matlab-mcp-core-server/releases/tag/v0.6.0

Release highlights:

- New cross-platform MCP Bundle; one-click installation in Claude Desktop

Enhancements:

- Provide structured output from check_matlab_code and additional information for MATLAB R2022b onwards

- Made project_path optional in evaluate_matlab_code tool for simpler tool calls

- Enhanced detect_matlab_toolboxes output to include product version

Bug fixes:

- Updated MCP Go SDK dependency to address CVE.

We encourage you to try this repository and provide feedback. If you encounter a technical issue or have an enhancement request, create an issue at https://github.com/matlab/matlab-mcp-core-server/issues

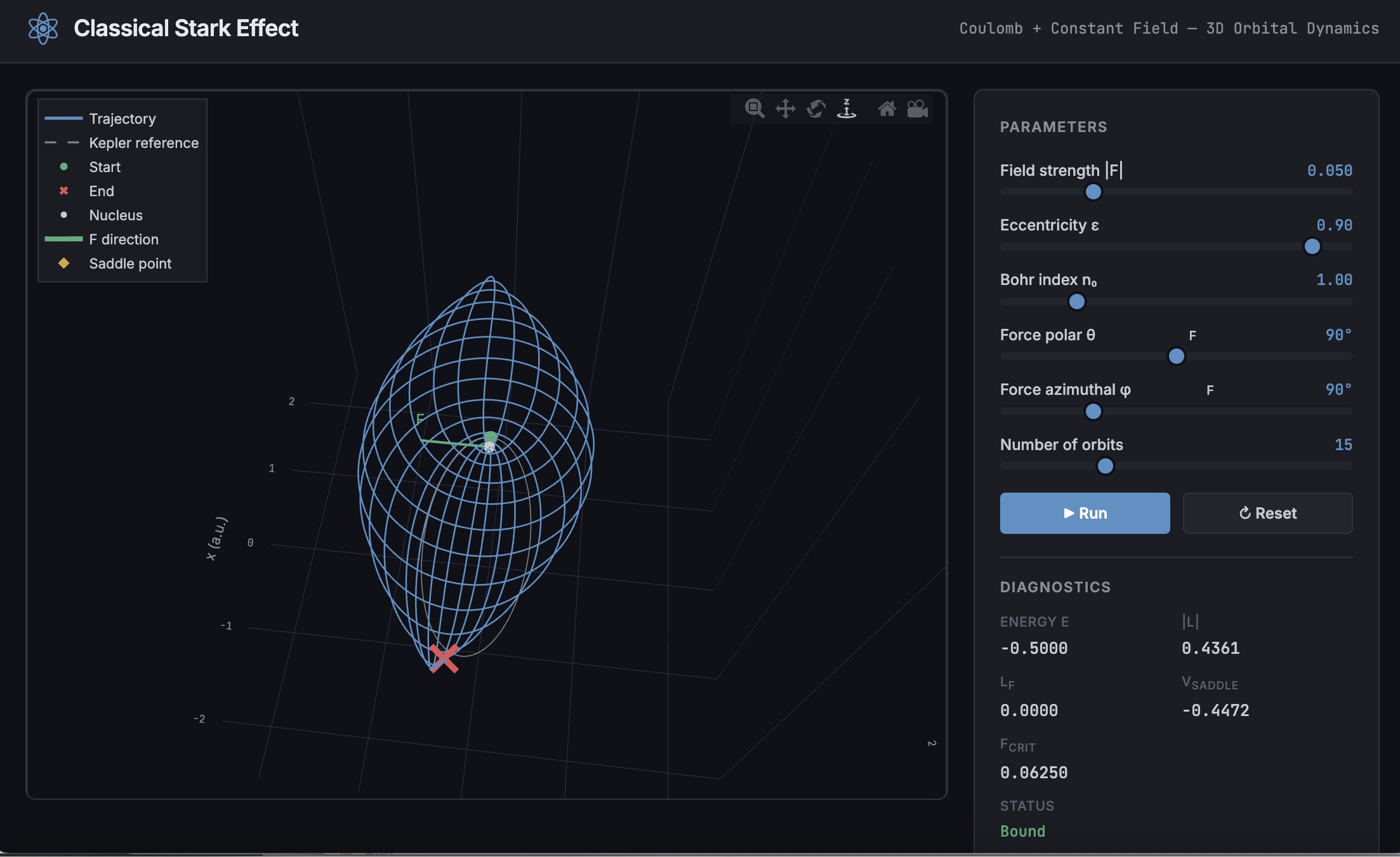

Missed a crowd‑favorite session featuring Marko Gecic at Infineon and Lucas Garcia at MathWorks?

This talk shows how to verify and test AI for real‑time, safety‑critical systems using an AI virtual sensor that estimates motor rotor position on an Infineon AURIX TC4x microcontroller. Built with MATLAB and Simulink, the demo covers training, verification, and real‑time control across a wide range of operating conditions.

You’ll see practical techniques to test robustness, measure sensitivity to input perturbations, and detect out‑of‑distribution behavior—critical steps for meeting standards like ISO 26262 and ISO 8800. The session also highlights how Model‑Based Design leverages AURIX TC4x features such as the PPU and CDSP to deploy AI with confidence.

Featuring: Dr. Arthur Clavière, Collins Aerospace

How can we be confident that a machine learning model will behave safely on data it’s never seen, especially in avionics? In this session, Dr. Arthur Clavière introduces a formal methods approach to verifying machine learning generalization. The talk highlights how formal verification can be applied to neural networks in safety-critical avionics systems.

💬 Discussion question:

Where do you see formal verification having the biggest impact on deploying ML in safety‑critical systems—and what challenges still stand in the way?

Join the conversation below 👇

I was reading Yann Debray's recent post on automating documentation with agentic AI and ended up spending more time than expected in the comments section. Not because of the comments themselves, but because of something small I noticed while trying to write one. There is no writing assistance of any kind before you post. You type, you submit, and whatever you wrote is live.

For a lot of people that is fine. But MATLAB Central has users from all over the world, and I have seen questions on MATLAB Answers where the technical reasoning is clearly correct but the phrasing makes it hard to follow. The person knew exactly what they meant. The platform just did not help them say it clearly.

I want to share a few ideas around this. They are not fully formed proposals but I think the direction is worth discussing, especially given how much AI tooling MathWorks has built recently.

What the platform has today

When you write a post in Discussions or an answer in MATLAB Answers, the editor gives you basic formatting options. Code blocks, some text styling, that is mostly it. The AI Chat Playground exists as a separate tool, and MATLAB Copilot landed in R2025a for the desktop. But none of that is inside the editor where people actually write community content.

Four things are missing that I think would make a real difference.

Grammar and clarity checking before you post

Not a forced rewrite. Just an optional Check My Draft button that highlights unclear sentences or anything that might trip a reader up. The user reviews it, decides what to change, then posts.

What makes this different from plugging in Grammarly is that a general-purpose tool does not know that readtable is a MATLAB function. It does not know that NaN, inf, or linspace are not errors. A MATLAB-aware checker could flag things that generic tools miss, like someone writing readTable instead of readtable in a solution post.

The llms-with-matlab package already exists on GitHub. Something like this could be built on top of it with a prompt that includes MATLAB function vocabulary as context. That is not a large lift given what is already there.
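The casing check described above does not strictly need an LLM. As a rough illustration, the sketch below flags identifiers that match a known MATLAB function name except for letter case. Everything in it is an assumption: the function name `find_casing_slips` is hypothetical, and the vocabulary is a tiny hand-picked subset standing in for a real function list.

```python
import re

# Illustrative subset only; a real checker would load the full function list.
KNOWN_FUNCTIONS = {"readtable", "writetable", "linspace", "zeros", "eig", "magic"}

# Lookup from lowercased name to the canonical spelling.
_BY_LOWER = {name.lower(): name for name in KNOWN_FUNCTIONS}
_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def find_casing_slips(text):
    """Return (written, canonical) pairs for identifiers that match a known
    MATLAB function name except for letter case."""
    slips = []
    for word in _IDENTIFIER.findall(text):
        canonical = _BY_LOWER.get(word.lower())
        if canonical is not None and word != canonical:
            slips.append((word, canonical))
    return slips
```

For example, `find_casing_slips("Use readTable to load the file")` reports `("readTable", "readtable")`. A plain word match like this will also flag ordinary English words that happen to collide with a function name (a sentence starting with "Magic", say), which is one reason the context-aware approach suggested above is attractive.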

Translation support

MATLAB Central already has a Japanese-language Discussions channel. That tells you something about the community. The platform is global but most of the technical content is in English, and there is a real gap there.

Two options that would help without being intrusive:

- Write in your language, click Translate, review the English version, then post. The user is still responsible for what goes live.

- A per-post Translate button so readers can view content in a language they are more comfortable with, without changing what is stored on the platform.

A student who has the right answer to a MATLAB Answers question might not post it because they are not confident writing in English. Translation support changes that. The community gets the answer and the contributor gets the credit.

In-editor code suggestions

When someone writes a solution post they usually write the code somewhere else, test it, copy it, paste it, and format it manually. An in-editor assistant that generates a starting scaffold from a plain-text description would cut that loop down.

The key word is scaffold, not a finished answer. The label should say something like AI-generated draft, verify before posting so it is clear the person writing is still accountable. MATLAB Copilot already does something close to this inside the desktop editor. Bringing a lighter version of it into the community editor feels like a natural extension of what already exists.

A note on feasibility

These ideas are not asking for something from scratch. MathWorks already has llms-with-matlab, the MCP Core Server, and MATLAB Copilot as infrastructure. Grammar checking and translation are well-solved problems at the API level. The MATLAB-specific vocabulary awareness is the part worth investing in. None of it should be on by default. All of it should be opt-in and clearly labeled when it runs.

One more thing: diagrams in posts

Right now the only way to include a diagram in a post is to make it externally and upload an image. A lightweight drag-and-drop diagram tool inside the editor would let people show a process or structure quickly without leaving the platform. Nothing complex, just boxes and arrows. For technical explanations it is often faster to draw than to write three paragraphs.

What I am curious about

I am a Data Science student at CU Boulder and an active MATLAB user. These ideas came up while using the platform, not from a product roadmap. I do not know what is already being discussed internally at MathWorks, so it is entirely possible some of this is in progress.

Has anyone else run into the same friction points when writing on MATLAB Central? And for anyone at MathWorks who works on the community platform, is the editor something that gets investment alongside the product tools?

Happy to hear where I am wrong on the feasibility side too.

AI assisted with grammar and framing. All ideas and editorial decisions are my own.

🚀 Unlock Smarter Control Design with AI

What if AI could help you design better controllers—faster and with confidence?

In this session, Naren Srivaths Raman and Arkadiy Turevskiy (MathWorks) show how control engineers are using MATLAB and Simulink to integrate AI into real-world control design and implementation.

You’ll see how AI is being applied to:

🧠 Advanced plant modeling using nonlinear system identification and reduced order modeling

📡 Virtual sensors and anomaly detection to estimate hard-to-measure signals

🎯 Data-driven control design, including nonlinear MPC with neural state-space models and reinforcement learning

⚡ Productivity gains with generative AI, powered by MATLAB Copilot

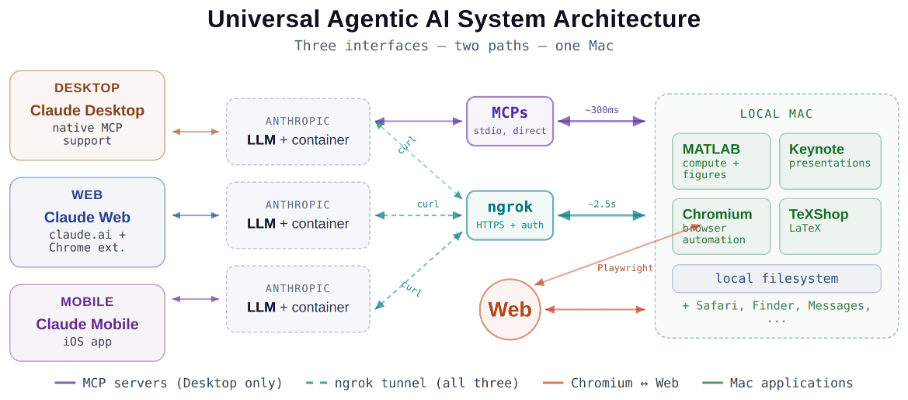

Giving All Your Claudes the Keys to Everything

Introduction

This started as a plumbing problem. I wanted to move files between Claude’s cloud container and my Mac faster than base64 encoding allows. What I ended up building was something more interesting: a way for every Claude – Desktop, web browser, iPhone – to control my entire computing environment with natural language. MATLAB, Keynote, LaTeX, Chrome, Safari, my file system, text-to-speech. All of it, from any device, through a 200-line Python server and a free tunnel.

It isn’t quite the ideal universal remote. Web and iPhone Claude do not yet have the agentic Playwright-based web automation capabilities available to Claude desktop via MCP. A possible solution may be explored in a later post.

Background information

The background here is a series of experiments I’ve been running on giving AI desktop apps direct access to local tools. See How to set up and use AI Desktop Apps with MATLAB and MCP servers which covers the initial MCP setup, A universal agentic AI for your laptop and beyond which describes what Desktop Claude can do once you give it the keys, and Web automation with Claude, MATLAB, Chromium, and Playwright which describes a supercharged browser assistant. This post is about giving such keys to remote Claude interfaces.

Through the Model Context Protocol (MCP), Claude Desktop on my Mac can run MATLAB code, read and write files, execute shell commands, control applications via AppleScript, automate browsers with Playwright, control iPhone apps, and take screenshots. These powers have been limited to the desktop Claude app – the one running on the machine with the MCP servers. My iPhone Claude app and a Claude chat launched in a browser interface each have a Linux container somewhere in Anthropic’s cloud. The container can reach the public internet. Until now, my Mac sat behind a home router with no public IP.

An HTTP server MCP replacement

A solution is a small HTTP server on the Mac. Use ngrok (free tier) to give the Mac a public URL. Then anything with internet access – including any Claude’s cloud container – can reach the Mac via curl.

iPhone/Web Claude -> bash_tool: curl https://public-url/endpoint

-> Internet -> ngrok tunnel -> Mac localhost:8765

-> Python command server -> MATLAB / AppleScript / filesystem

The server is about 200 lines of Python using only the standard library. Two files on the server side (the Python server and a launcher script), one terminal command to start. ngrok provides HTTPS and HTTP Basic Auth with a single command-line flag.
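For readers who would rather call the tunnel from Python than from curl, here is a minimal sketch of an authenticated request to the /ping endpoint. The helper name `build_ping_request` and the placeholder URL and credentials are assumptions; only the /ping path and the use of HTTP Basic Auth come from the description above.

```python
import base64
import urllib.request


def build_ping_request(base_url, user, password):
    """Build a GET request for /ping carrying an HTTP Basic Auth header."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    req = urllib.request.Request(base_url.rstrip("/") + "/ping")
    req.add_header("Authorization", f"Basic {token}")
    return req


# Usage (placeholder values; substitute the real tunnel URL and credentials):
# with urllib.request.urlopen(build_ping_request(url, user, password)) as resp:
#     print(resp.status)
```

Because the credentials travel in a header on every request, this only makes sense over the HTTPS URL that ngrok provides.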

The complete implementation consists of three files:

claude_command_server.py (~200 lines): A Python HTTP server using http.server from the standard library. It implements BaseHTTPRequestHandler with do_GET and do_POST methods dispatching to endpoint handlers. Shell commands are executed via subprocess.run with timeout protection. File paths are validated against allowed roots using os.path.realpath to prevent directory traversal attacks.

start_claude_server.sh (~20 lines): A Bash script that starts the Python server as a background process, then starts ngrok in the foreground. A trap handler ensures both processes are killed on Ctrl+C.

iphone_matlab_watcher.m (~60 lines): MATLAB timer function that polls for command files every second.

The server exposes six GET and six POST endpoints. The GET endpoints thus far handle retrieval: /ping for health checks, /list to enumerate files in a transfer directory, /files/<n> to download a file from that directory, /read/<path> to read any file under allowed directories, and / which returns the endpoint listing itself. The POST endpoints handle execution and writing: /shell runs an allowlisted shell command, /osascript runs AppleScript, /matlab evaluates MATLAB code through a file-watcher mechanism, /screenshot captures the screen and returns a compressed JPEG, /write writes content to a file, and /upload/<n> accepts binary uploads.
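The dispatch pattern described above can be sketched in a few lines. This is not the author's claude_command_server.py. It is a minimal illustration assuming a hypothetical route table (`GET_ROUTES`) and helper (`dispatch_get`), and it covers only the /ping and / endpoints.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical route table: path -> function returning a JSON-serializable value.
GET_ROUTES = {
    "/ping": lambda: {"status": "ok"},
    "/": lambda: {"endpoints": sorted(GET_ROUTES)},
}


def dispatch_get(path):
    """Return (status_code, payload) for a GET path."""
    handler = GET_ROUTES.get(path)
    if handler is None:
        return 404, {"error": "unknown endpoint"}
    return 200, handler()


class CommandHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Look up the endpoint, then serialize whatever it returned as JSON.
        status, payload = dispatch_get(self.path)
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server(port=8765):
    """Bind to localhost only, so the server is unreachable without the tunnel."""
    return HTTPServer(("127.0.0.1", port), CommandHandler)
```

Keeping the routing in a plain function makes it testable without opening a socket; `make_server().serve_forever()` would start listening on the port used in the diagram above.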

File access is sandboxed by endpoint. The /read/ endpoint restricts reads to ~/Documents, ~/Downloads, and ~/Desktop and their subfolders. The /write and /upload endpoints restrict writes to ~/Documents/MATLAB and ~/Downloads. Path traversal is validated – .. sequences are rejected.
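A root check built on os.path.realpath might look like the sketch below. The function name `is_allowed` is hypothetical, and the approach differs slightly from the description above: rather than rejecting `..` outright, it resolves the path first and then tests whether the result still lies under an allowed root.

```python
import os


def is_allowed(path, allowed_roots):
    """True if path resolves to a location inside one of allowed_roots."""
    # realpath collapses ".." segments and follows symlinks before comparing.
    real = os.path.realpath(os.path.expanduser(path))
    for root in allowed_roots:
        real_root = os.path.realpath(os.path.expanduser(root))
        try:
            # commonpath compares whole path components, so a sibling such as
            # "Documents2" does not count as being inside "Documents".
            if os.path.commonpath([real, real_root]) == real_root:
                return True
        except ValueError:
            # Raised when the paths cannot be compared (e.g. different drives).
            continue
    return False
```

With this in place, `is_allowed("~/Documents/../.ssh/id_rsa", ["~/Documents"])` is False because the resolved path falls outside the root.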

The /shell endpoint restricts commands to an explicit allowlist: open, ls, cat, head, tail, cp, mv, mkdir, touch, find, grep, wc, file, date, which, ps, screencapture, sips, convert, zip, unzip, pbcopy, pbpaste, say, mdfind, and curl. No rm, no sudo, no arbitrary execution. Content creation goes through /write.
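An allowlist of this kind can be enforced by checking the program name before anything runs. The sketch below is an illustration under stated assumptions: `run_allowlisted` is a hypothetical name, the allowlist is a small subset of the one listed above, and the 30 second timeout is arbitrary.

```python
import shlex
import subprocess

ALLOWED = {"ls", "cat", "head", "tail", "date", "wc"}  # subset, for illustration


def run_allowlisted(command, timeout=30):
    """Run command without a shell if its program name is on the allowlist."""
    argv = shlex.split(command)
    if not argv or argv[0] not in ALLOWED:
        raise PermissionError(f"command not allowed: {argv[:1]}")
    # Passing a list (no shell=True) means ';', '&&' and '|' are plain
    # arguments to the program, not ways to chain a second command.
    result = subprocess.run(argv, capture_output=True, text=True, timeout=timeout)
    return result.returncode, result.stdout, result.stderr
```

Note that checking only the program name does not constrain its arguments, so the effective security of such a list depends on what each allowed program can be made to do.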

Two endpoints have no path restrictions: /osascript and /matlab. The /osascript endpoint passes its payload to macOS’s osascript command, which executes AppleScript – Apple’s scripting language for controlling applications via inter-process Apple Events. An AppleScript can open, close, or manipulate any application, read or write any file the user account can access, and execute arbitrary shell commands via “do shell script”. The /matlab endpoint evaluates arbitrary MATLAB code in a persistent session. Both are as powerful as the user account itself. This is deliberate – these are the endpoints that make the server useful for controlling applications. The security boundary is authentication at the tunnel, not restriction at the endpoint.

The MATLAB Workaround

MATLAB on macOS doesn’t expose an AppleScript interface, and the MCP MATLAB tool uses a stdio connection available only from Desktop. So how does iPhone Claude run MATLAB?

An answer is a file-based polling mechanism. A MATLAB timer function checks a designated folder every second for new command files. The remote Claude writes a .m file via the server, MATLAB executes it, writes output to a text file, and sets a completion flag. The round trip is about 2.5 seconds including up to 1 second of polling latency. (This could be reduced by shortening the polling interval or replacing it with Java’s WatchService for near-instant file detection.)
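The server side of that round trip can be sketched as follows. This is an illustration, not the author's code: the function name `submit_matlab_command` and the file naming scheme (`cmd_<id>.m`, `.out.txt`, `.done`) are assumptions, and the MATLAB watcher is assumed to write the output file before it creates the completion flag.

```python
import os
import time
import uuid


def submit_matlab_command(code, watch_dir, timeout=30.0, poll=0.1):
    """Write code as a command file and wait for the watcher's done flag."""
    job = "cmd_" + uuid.uuid4().hex[:8]
    script = os.path.join(watch_dir, job + ".m")
    output = os.path.join(watch_dir, job + ".out.txt")
    done = os.path.join(watch_dir, job + ".done")

    # Write under a temporary name, then rename, so the watcher never
    # picks up a half-written command file.
    tmp = script + ".tmp"
    with open(tmp, "w", encoding="utf-8") as fh:
        fh.write(code)
    os.replace(tmp, script)

    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if os.path.exists(done):
            with open(output, encoding="utf-8") as fh:
                return fh.read()
        time.sleep(poll)
    raise TimeoutError(f"no completion flag for {job} after {timeout} s")
```

The separate completion flag matters: checking for the output file alone could return a partial result if MATLAB is still writing it.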

This method provides full MATLAB access from a phone or web Claude, with your local file system available, under AI control. MATLAB Mobile and MATLAB Online do not offer agentic AI access.

Alternate approaches

I’ve used Tailscale Funnel as an alternative tunnel to manually control my MacBook from iPhone, but you can’t install Tailscale inside Anthropic’s container. An alternative approach would replace both the local MCP servers and the command server with a single remote MCP server running on the Mac, exposed via ngrok and registered as a custom connector in claude.ai. This would give all three Claude interfaces native MCP tool access, including Playwright or Puppeteer, with comparable latency, but I’ve not tried this.

Security

Exposing a command server to the internet raises questions. The security model has several layers. ngrok handles authentication – every request needs a valid password. Shell commands are restricted to an allowlist (ls, cat, cp, screencapture – no rm, no arbitrary execution). File operations are sandboxed to specific directory trees with path traversal validation. The server binds to localhost, unreachable without the tunnel. And you only run it when you need it.

The AppleScript and MATLAB endpoints are relatively unrestricted in my setup, giving Claude Desktop significant capabilities via MCP. Extending them through an authenticated tunnel is a trust decision.

Initial test results

I ran an identical three-step benchmark from each Claude interface: get a Mac timestamp via AppleScript, compute eigenvalues of a 10x10 magic square in MATLAB, and capture a screenshot. Same Mac, same server, same operations.

| Step | Desktop (MCP) | Web Claude | iPhone Claude |
|------|:------------:|:----------:|:-------------:|
| AppleScript timestamp | ~5ms | 626ms | 623ms |
| MATLAB eig(magic(10)) | 108ms | 833ms | 1,451ms |
| Screenshot capture | ~200ms | 1,172ms | 980ms |
| **Total** | **~313ms** | **2,663ms** | **3,078ms** |

The network overhead is consistent: web and iPhone both add about 620ms per call (the ngrok round trip through residential internet). Desktop MCP has no network overhead at all. The MATLAB variance between web (833ms) and iPhone (1,451ms) is mostly the file-watcher polling jitter – up to a second of random latency depending on where in the polling cycle the command arrives.

All three Claudes got the correct eigenvalues. All three controlled the Mac. The slowest total was 3 seconds for three separate operations from a phone. Desktop is 10x faster, but for “I’m on my phone and need to run something” – 3 seconds is fine.

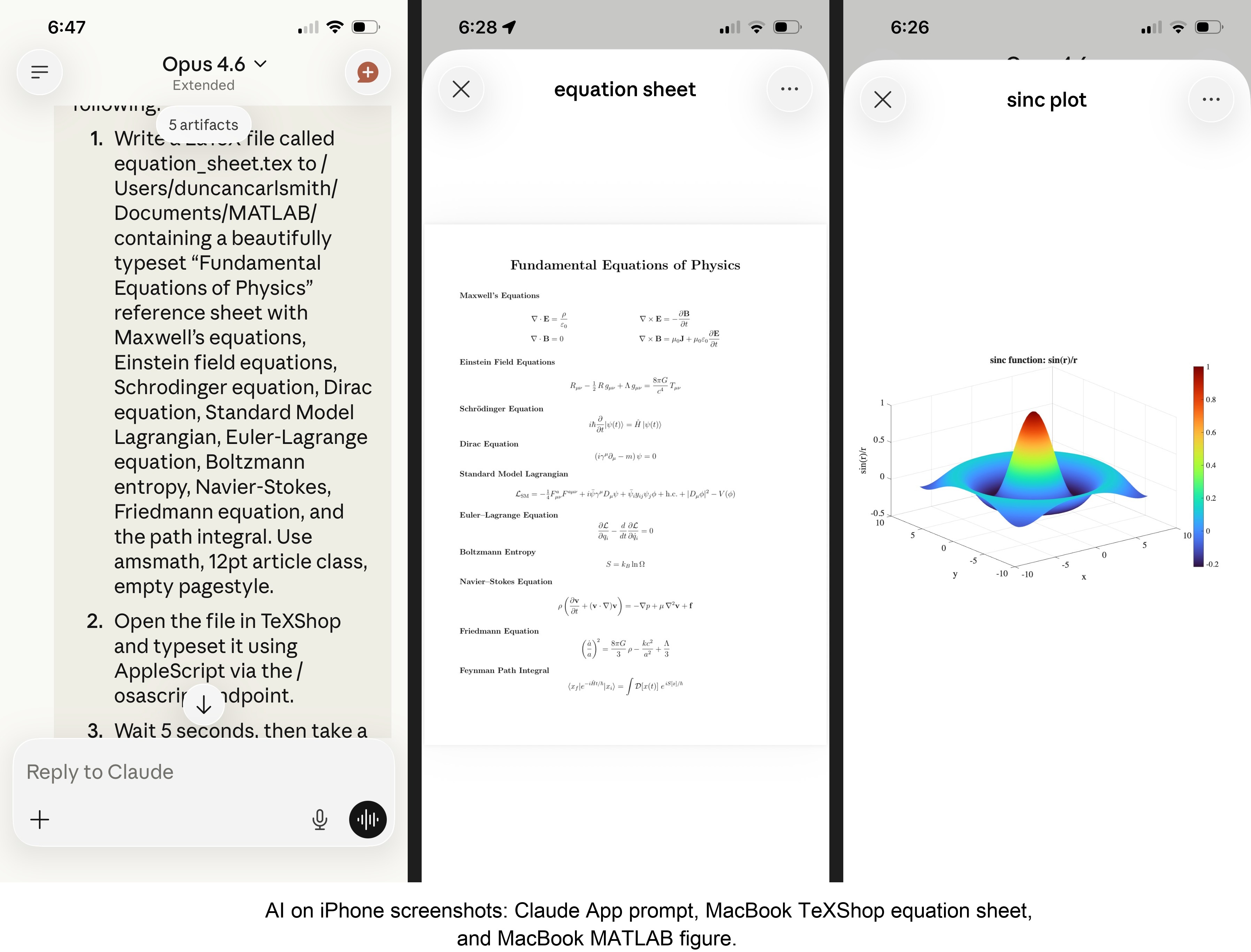

Other tests

MATLAB: Computations (determinants, eigenvalues), 3D figure generation, image compression. The complete pipeline – compute on Mac, transfer figure to cloud, process with Python, send back – runs in under 5 seconds. This was previously impossible; there was no reasonable mechanism to move a binary file from the container to the Mac.

Keynote: Built multi-slide presentations via AppleScript, screenshotted the results.

TeXShop: Wrote a .tex file containing ten fundamental physics equations (Maxwell through the path integral), opened it in TeXShop, typeset via AppleScript, captured the rendered PDF. Publication-quality typesetting from a phone. I don’t know who needs this at 11 PM on a Sunday, but apparently I do.

Safari: Launched URLs on the Mac from 2,000 miles away. Or 6 feet. The internet doesn’t care.

Finder: Directory listings, file operations, the usual filesystem work.

Text-to-speech: Mac spoke “Hello from iPhone” and “Web Claude is alive” on command. Silly but satisfying proof of concept.

File Transfer: The Original Problem, Solved

Remember, this started because I wanted faster file transfers for desktop Claude. Compare:

| Method | 1 MB file | 5 MB file | Limit |
|--------|-----------|-----------|-------|
| Old (base64 in context) | painful | impossible | ~5 KB |
| Command server upload | 455ms | ~3.6s | tested to 5 MB+ |
| Command server download | 1,404ms | ~7s | tested to 5 MB+ |

The improvement is the difference between “doesn’t work” and “works in seconds.”

Cross-Device Memory

A nice bonus: iPhone and web Claudes can search Desktop conversations using the same memory tools. Context established in a Desktop session – including the server password – can be retrieved from a phone conversation. You do have to ask explicitly (“search my past conversations for the command server”) or insert information into the persistent context using settings rather than assuming Claude will look on its own.

Web Claudes

The most surprising result was web Claude. I expected it to work – same container infrastructure, same bash_tool, same curl. But “expected to work” and “just worked, first try, no special setup” are different things. I opened claude.ai in a browser, gave it the server URL and password, and it immediately ran MATLAB, took screenshots, and made my Mac speak. No configuration, no troubleshooting, no “the endpoint format is wrong” iterations. If you’re logged into claude.ai and the server is running, you have full Mac access from any browser on any device.

What This Means

MCP gave Desktop Claude the keys to my Mac. A 200-line server and a free tunnel duplicated those keys for every other Claude I use. Three interfaces, one Mac, all the same applications, all controlled in natural language.

Additional information

An appendix gives Claude’s detailed description of the two communication paths. Code and instructions are available at the MATLAB File Exchange: Giving All Your Claudes the Keys to Everything.

Acknowledgments and disclaimer

The problem of file transfer was identified by the author. Claude suggested and implemented the method, and the author suggested the generalization to accommodate remote Claudes. This submission was created with Claude assistance. The author has no financial interest in Anthropic or MathWorks.

—————

Appendix: Architecture Comparison

Path A: Desktop Claude with a Local MCP Server

When you type a message in the Desktop Claude app, the Electron app sends it to Anthropic’s API over HTTPS. The LLM processes the message and decides it needs a tool – say, browser_navigate from Playwright. It returns a tool_use block with the tool name and arguments. That block comes back to the Desktop app over the same HTTPS connection.

Here is where the local MCP path kicks in. At startup, the Desktop app read claude_desktop_config.json, found each MCP server entry, and spawned it as a child process on your Mac. It performed a JSON-RPC initialize handshake with each one, then called tools/list to get the tool catalog – names, descriptions, parameter schemas. All those tools were merged and sent to Anthropic with your message, so the LLM knows what’s available.
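The startup exchange can be sketched as two JSON-RPC 2.0 requests. This is a minimal illustration, not the Desktop app's actual code: the protocol version string and the client name are assumptions, and a real client also sends an initialized notification between the two requests.

```python
import json


def jsonrpc_request(request_id, method, params=None):
    """Serialize a JSON-RPC 2.0 request as a single line of JSON."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Step 1: handshake. The field values below are illustrative assumptions.
initialize = jsonrpc_request(1, "initialize", {
    "protocolVersion": "2024-11-05",  # assumed version string
    "capabilities": {},
    "clientInfo": {"name": "example-client", "version": "0.0.1"},  # hypothetical
})

# Step 2: ask the server for its tool catalog.
list_tools = jsonrpc_request(2, "tools/list")

print(initialize)
print(list_tools)
```

Each line would be written to the child process's stdin, and the server's reply, carrying the same id, would be read from its stdout.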

When the tool_use block arrives, the Desktop app looks up which child process owns that tool and writes a JSON-RPC message to that process’s stdin. The MCP server (Playwright, MATLAB, whatever) reads the request, does the work locally, and writes the result back to stdout as JSON-RPC. The Desktop app reads the result, sends it back to Anthropic’s API, the LLM generates the final text response, and you see it.
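The routing step amounts to a lookup table from tool name to owning server, plus a tools/call request. The class below is a hypothetical sketch of that idea; it builds the line that would be written to the server's stdin but does not spawn any process, and the server and tool names are examples only.

```python
import json


class ToolRouter:
    """Map tool names to the server that advertised them (illustrative only)."""

    def __init__(self):
        self._owner = {}   # tool name -> server name
        self._next_id = 1  # JSON-RPC request ids must be unique per connection

    def register(self, server_name, tool_names):
        """Record the tools a server returned from tools/list."""
        for tool_name in tool_names:
            self._owner[tool_name] = server_name

    def route(self, tool_name, arguments):
        """Return (server name, newline-terminated JSON-RPC request)."""
        server_name = self._owner[tool_name]  # raises KeyError for unknown tools
        request = {
            "jsonrpc": "2.0",
            "id": self._next_id,
            "method": "tools/call",
            "params": {"name": tool_name, "arguments": arguments},
        }
        self._next_id += 1
        return server_name, json.dumps(request) + "\n"


router = ToolRouter()
router.register("playwright", ["browser_navigate"])
server, line = router.route("browser_navigate", {"url": "https://example.com"})
print(server, line, end="")
```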

The Desktop app is really just a process manager and JSON-RPC router. The config file is a registry of servers to spawn. You can plug in as many as you want – each is an independent child process advertising its own tools. The Desktop app doesn’t care what they do internally. The MCP execution itself adds almost no latency because it’s entirely local, process-to-process on your Mac. The time you perceive is dominated by the two HTTPS round-trips to Anthropic, which all three Claude interfaces share equally.
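To make the "registry of servers to spawn" concrete, here is a small sketch that reads a config in the mcpServers layout and lists the command line each entry would launch. The server names, commands, and environment variable are placeholders, not the author's actual configuration.

```python
import json

# Hypothetical config contents; real entries depend on the servers installed.
SAMPLE_CONFIG = """
{
  "mcpServers": {
    "example-browser": {"command": "npx", "args": ["example-browser-mcp"]},
    "example-matlab": {
      "command": "/path/to/example-matlab-mcp",
      "args": [],
      "env": {"EXAMPLE_FLAG": "1"}
    }
  }
}
"""


def spawn_plan(config_text):
    """Return (name, argv, env) for each server entry, without launching anything."""
    config = json.loads(config_text)
    plan = []
    for name, entry in config.get("mcpServers", {}).items():
        argv = [entry["command"], *entry.get("args", [])]
        plan.append((name, argv, entry.get("env", {})))
    return plan


for name, argv, env in spawn_plan(SAMPLE_CONFIG):
    print(name, argv, env)
```

Adding a server is then just one more entry in the mapping, which matches the plug-in behavior described above.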

Path B: iPhone Claude with the Command Server

When you type a message in the Claude iOS app, the app sends it to Anthropic’s API over HTTPS, same as Desktop. The LLM processes the message. It sees bash_tool in its available tools – provided by Anthropic’s container infrastructure, not by any MCP server. It decides it needs to run a curl command and returns a tool_use block for bash_tool.

Here, the path diverges completely from Desktop. There is no iPhone app involvement in tool execution. Anthropic routes the tool_use to a Linux container running in Anthropic’s cloud, assigned to your conversation. This container is the “computer” that iPhone Claude has access to. The container runs the curl command – a real Linux process in Anthropic’s data center.

The curl request goes out over the internet to ngrok’s servers. ngrok forwards it through its persistent tunnel to the claude_command_server.py process running on localhost on your Mac. The command server authenticates the request (basic auth), then executes the requested operation – in this case, running an AppleScript via subprocess.run(['osascript', ...]). macOS receives the Apple Events, launches the target application, and does the work.
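The authentication step can be illustrated with standard HTTP Basic auth. This is a sketch of the general technique, not the code in claude_command_server.py: the function names and credentials are hypothetical, and the constant-time comparison is a design choice assumed here.

```python
import base64
import hmac


def basic_auth_header(username, password):
    """Build the Authorization header value a client such as curl would send."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8"))
    return "Basic " + token.decode("ascii")


def is_authorized(header_value, username, password):
    """Check an incoming Authorization header against the expected credentials."""
    if not header_value:
        return False
    expected = basic_auth_header(username, password)
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(header_value.encode("utf-8"), expected.encode("utf-8"))


header = basic_auth_header("user", "pass")  # placeholder credentials
print(header)
print(is_authorized(header, "user", "pass"))
```

Basic auth only encodes the credentials, it does not encrypt them, so this scheme relies on the tunnel's HTTPS endpoint for confidentiality.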

The result flows back the same way: osascript returns output to the command server, which packages it as JSON and sends the HTTP response back through the ngrok tunnel, through ngrok’s servers, back to the container’s curl process. bash_tool captures the output. The container sends the tool result back to Anthropic’s API. The LLM generates the text response, and the iPhone app displays it.

The iPhone app is a thin chat client. It never touches tool execution – it only sends and receives chat messages. The container does the curl work, and the command server bridges from Anthropic’s cloud to your Mac. The LLM has to know to use curl with the right URL, credentials, and JSON format. There is no tool discovery, no protocol handshake, no automatic routing. The knowledge of how to reach your Mac is carried entirely in the LLM’s context.

—————

Duncan Carlsmith, Department of Physics, University of Wisconsin-Madison. duncan.carlsmith@wisc.edu